Architectural Cause of Delayed Threat Response

Every breach has two clocks running simultaneously. The attacker’s clock starts at the moment of initial access. The defender’s clock starts when something in the environment finally generates a signal worth acting on.

In a legacy SOC, those two clocks are not even in the same time zone.

The CrowdStrike 2026 Global Threat Report puts the attacker’s clock in plain numbers: the average eCrime breakout time — the window from initial compromise to lateral movement — fell to 29 minutes in 2025. A 65% acceleration from the prior year. The fastest observed breakout on record was 27 seconds. In one documented intrusion, data exfiltration had already begun within four minutes of initial access.

The breach lifecycle is a detection architecture problem. Traditional SOCs were built around assumptions about threat velocity that no longer hold.

What Detection Latency Actually Measures

Detection latency is the elapsed time between initial compromise and the moment your team identifies and responds to it.

In practice, it breaks down into three compounding delays, and in a traditional SOC all three are failing at once.

Dwell time is the window between initial compromise and the first indicator that something is wrong.

Alert-to-triage time is the gap between an alert firing and an analyst actually investigating it.

Triage-to-containment time is the gap between a confirmed threat and actual containment.
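
As a rough illustration of how these three segments might be measured, the sketch below splits one incident into its component delays. The `IncidentTimeline` record and its field names are hypothetical, the timestamps are invented, and the sketch assumes the threat is confirmed at the moment triage begins so that the three segments add up to the end-to-end latency.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class IncidentTimeline:
    """Hypothetical record of the four timestamps that bound one incident."""
    initial_compromise: datetime
    first_alert: datetime
    triage_started: datetime  # assumption: also the moment the threat is confirmed
    contained: datetime


def latency_segments(t: IncidentTimeline) -> dict[str, timedelta]:
    """Split end-to-end detection latency into its three segments."""
    return {
        "dwell": t.first_alert - t.initial_compromise,
        "alert_to_triage": t.triage_started - t.first_alert,
        "triage_to_containment": t.contained - t.triage_started,
    }


# Illustrative timestamps only, not real incident data.
example = IncidentTimeline(
    initial_compromise=datetime(2025, 3, 1, 9, 0),
    first_alert=datetime(2025, 3, 1, 15, 0),
    triage_started=datetime(2025, 3, 2, 10, 0),
    contained=datetime(2025, 3, 2, 14, 0),
)
segments = latency_segments(example)
total = sum(segments.values(), timedelta())

for name, duration in segments.items():
    print(f"{name}: {duration}")
print(f"total: {total}")
```

Tracking the segments separately, rather than one combined number, shows which of the three delays dominates in a given environment.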

Security Debt: How Rational Decisions Accumulate Into Risk

How does a well-resourced organization end up with a SOC that cannot detect an intrusion for two weeks? Nobody built it to fail.

“Security debt doesn’t just affect performance. It affects trust.”

— ISACA, Security Debt: The Unseen Risk, 2026

It happens one reasonable decision at a time. Detection rules are left unchanged after the threat landscape shifts. A manual workflow is retained because replacing it requires downtime the team cannot afford. Each decision is defensible in isolation, but accumulated over years they produce an infrastructure that cannot operate at the speed modern attackers demand.

| Debt Domain | How It Builds | What It Costs You |
|---|---|---|
| Detection Debt | Rules written for yesterday’s threats, never revised | Growing blind spots as the attack landscape evolves |
| Data Debt | Siloed telemetry, inconsistent formats, incomplete source coverage | Correlation that takes hours instead of seconds |
| Process Debt | Manual workflows designed for lower alert volumes | Investigation times that stretch as alert loads grow |
| Talent Debt | Burnout, attrition, and no investment in professional development | Institutional knowledge that walks out the door |
| Architecture Debt | Batch pipelines, inflexible platforms, bolt-on integrations | A foundation that cannot support real-time detection |

Detection latency is the accumulated interest on years of delayed architectural investment.

What Detection Latency Is Actually Doing to Your Organization

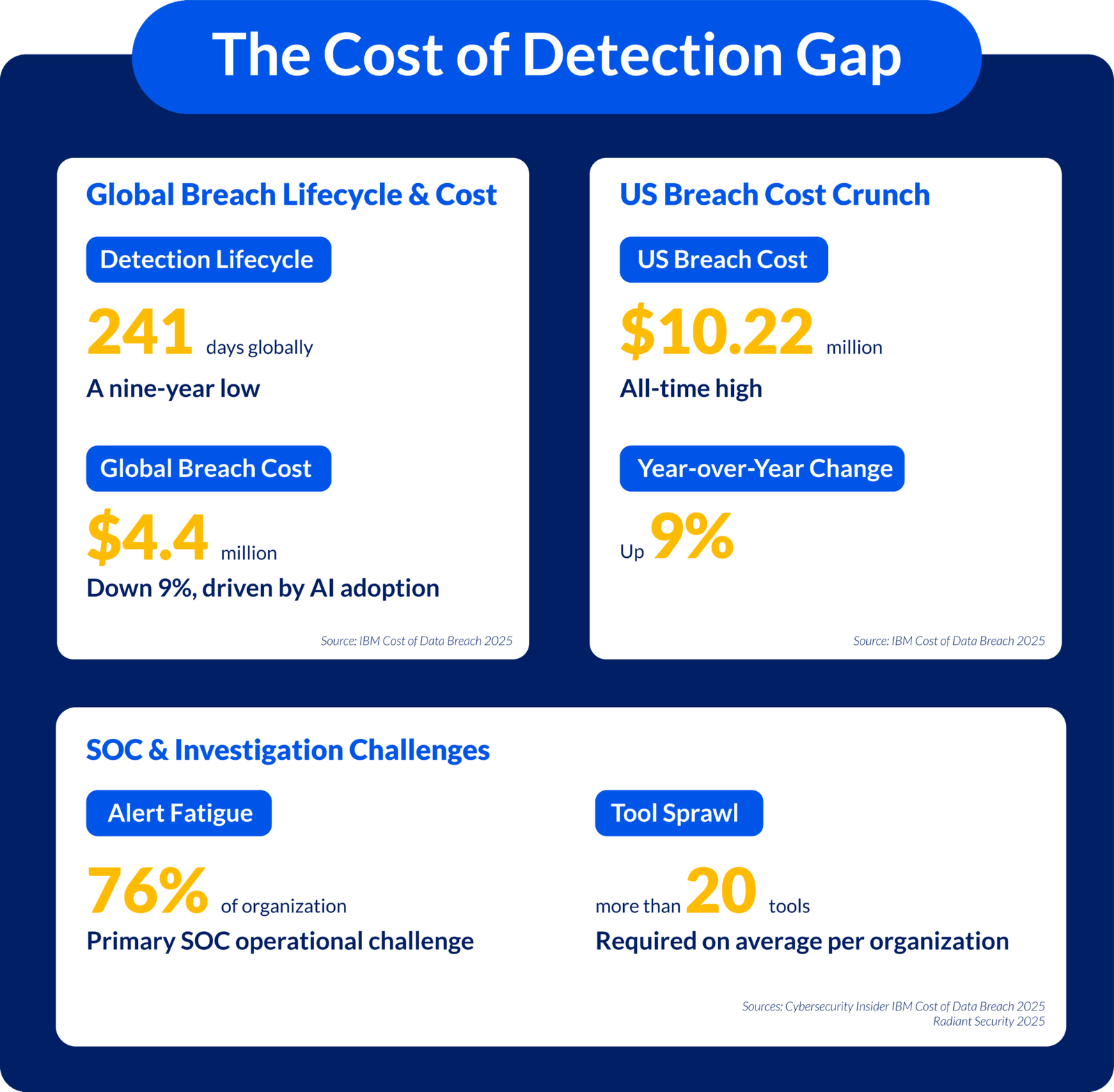

Most organizations discover the true cost of detection latency the same way — after a major incident forces the calculation. The data removes the need to wait for that moment. Taken together, the figures from 2024, 2025, and 2026 describe not a series of isolated statistics, but a compounding system failure: a detection window measured in days, a breach lifecycle measured in months, and an attacker moving in minutes.

Breach Timeline

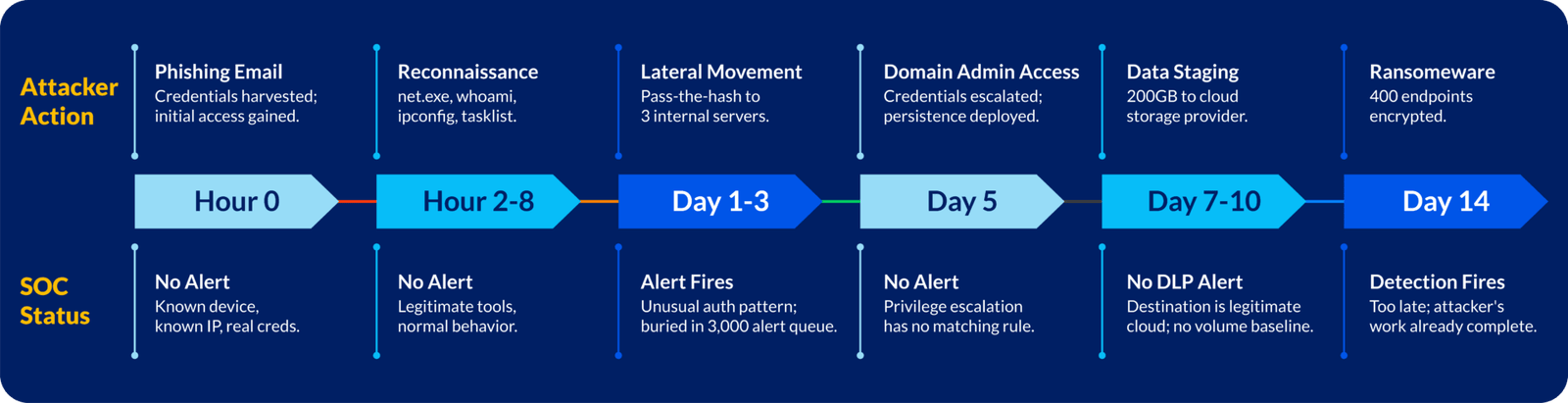

The composite timeline reflects the typical trajectory of a modern intrusion in a legacy environment. It is not a worst case: the median for internally detected breaches in 2025 was 16 days.

Why This Timeline Repeats

By the time the SOC detects the intrusion, the attacker’s work is done. The gap is not a tool problem. Every tool was deployed and operational. The architecture itself is the problem.

A legacy SOC cannot close this gap by adding rules, alerts, or analysts. The fix requires architectural replacement, not patches.

Key Architectural Failures

| Failure | Impact |
|---|---|
| No Behavioral Baseline | Attacker reconnaissance looks like normal admin work |
| Alert Volume Exceeds Capacity | 3,000 alerts per day; 90% never investigated |
| No Real-Time Data Integration | Alerts sit in separate consoles; a manual pivot is required |
| Signature-Based Detection | Rules match known patterns; miss novel techniques |
| Manual Response | Hours between detection and containment |

Why the Architectural Fix Keeps Getting Deferred

Senior security leaders know their SOC architecture is broken. They see the data and feel it in incident response.

Visible Risk vs. Hidden Risk

Replacing an enterprise SIEM touches every log source, detection rule, downstream integration, and operational workflow—while the existing SOC must remain functional. The scale of transition makes indefinite deferral feel responsible.

The risk of standing still (continued breaches, slower detection, and analyst burnout) is harder to quantify and easier to ignore.

Sunk Cost Trap

Organizations that spent millions on current platforms face a real barrier to replacement. Instead of rebuilding, they augment incrementally: another module, another integration. The result: obsolete architecture persists while complexity increases and latency remains.

Compliance Hides Detection Gaps

Compliance frameworks measure minimum control baselines, not detection capability. An organization can pass every audit while operating with a detection window measured in months.

When audits pass, leadership confidence rises, and modernization funding gets redirected elsewhere.

Expertise Shortage

Architectural modernization demands data engineering, ML model tuning, and detection engineering at scale—capabilities most enterprise security teams lack in depth. Building them takes longer than a budget cycle.

What Security Leaders Must Do Now

The challenge is establishing an accurate picture of actual risk, distinguishing assurance grounded in evidence from confidence grounded in the absence of known incidents.

Five Questions Worth Asking Now

- When did your SOC last measure its actual mean time to detect — not the theoretical target, but the live operational average?

- What proportion of daily alerts are investigated? What happens to the ones that are not?

- How long does a confirmed threat sit in the queue before containment begins?

- If an attacker used only legitimate tools and credentials, would your current detection rules surface that activity?

- Is your security architecture being retested as your AI and cloud footprint expands — or only at fixed audit intervals?

Closing the Gap: Where to Start

Modernization does not mean replacing everything. It means an honest architectural audit followed by phased changes that fix structural causes, not patch complexity.

Prioritize these five architectural areas.

Centralize Telemetry – Unify all telemetry into a single data lake with consistent normalization. Target: under five minutes from event generation to analyst visibility.

Build Behavioral Baselines – Deploy UEBA to establish dynamic baselines per user, device, and process. Make deviation from baseline—not signature matching—your primary detection trigger.
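
To make "deviation from baseline" concrete, here is a minimal sketch that flags an observation sitting far outside an entity's own history. It uses a plain z-score as a stand-in; commercial UEBA products use richer models, and the function name, threshold, account name, and sample counts are all assumptions for illustration.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], observed: float, threshold: float = 3.0) -> bool:
    """Flag `observed` when it is more than `threshold` standard deviations
    away from the mean of this entity's own history."""
    if len(history) < 2:
        return False  # too little data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat history: any change at all is a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > threshold


# Hypothetical daily counts of distinct hosts one service account logged in to.
baseline = {"svc-backup": [2, 3, 2, 3, 2, 3, 2]}

print(is_anomalous(baseline["svc-backup"], 3))   # within the account's normal range
print(is_anomalous(baseline["svc-backup"], 40))  # far outside it
```

The point of the per-entity history is that 40 hosts in a day may be routine for a patching system and alarming for a backup account; no static signature captures that difference.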

Automate Tier-1 Triage – Implement SOAR for initial investigation on well-understood alert types. Automated enrichment and deduplication reduce queue volume before an analyst sees it.
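
The deduplication and enrichment step can be sketched in a few lines. This is plain Python rather than any vendor's SOAR API; the `Case` type, the alert fields (`rule`, `host`), and the asset-owner lookup are hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Case:
    """One analyst-facing case built from one or more raw alerts."""
    rule: str
    host: str
    count: int = 0
    context: dict = field(default_factory=dict)


def triage(alerts: list[dict], asset_owner: dict[str, str]) -> list[Case]:
    """Collapse alerts that share (rule, host) into one case, then attach asset context."""
    cases: dict[tuple[str, str], Case] = {}
    for alert in alerts:
        key = (alert["rule"], alert["host"])
        case = cases.setdefault(key, Case(rule=alert["rule"], host=alert["host"]))
        case.count += 1
    for case in cases.values():
        # Enrichment: look up who owns the affected asset.
        case.context["owner"] = asset_owner.get(case.host, "unknown")
    return list(cases.values())


# Invented alerts for illustration.
alerts = [
    {"rule": "suspicious_powershell", "host": "ws-014"},
    {"rule": "suspicious_powershell", "host": "ws-014"},
    {"rule": "suspicious_powershell", "host": "ws-014"},
    {"rule": "new_admin_account", "host": "dc-01"},
]
queue = triage(alerts, {"dc-01": "identity-team"})
print(len(alerts), "alerts ->", len(queue), "cases")
```

Here four raw alerts reach the analyst as two cases, each already carrying the context that would otherwise require a manual lookup.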

Integrate Real-Time Threat Intelligence – Move from scheduled IOC imports to continuous feeds that enrich alerts at triage time, not the following morning.

Measure What Matters – Treat Mean Time to Detect and Mean Time to Contain as live operational KPIs. Supplement with MITRE ATT&CK adversary simulation to close real detection gaps.
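
One simple way to turn an adversary simulation into a number is to compare the techniques exercised against the techniques that actually produced a detection. The sketch below assumes the simulation results are available as sets of ATT&CK technique IDs; the function name and the sample results are invented for illustration.

```python
def coverage_gaps(simulated: set[str], detected: set[str]) -> tuple[float, list[str]]:
    """Return the share of simulated techniques that were detected,
    plus the sorted IDs of the techniques that were missed."""
    if not simulated:
        raise ValueError("no techniques were simulated")
    missed = sorted(simulated - detected)
    return len(simulated & detected) / len(simulated), missed


# ATT&CK technique IDs; which ones were detected is made up for this example.
simulated = {"T1059", "T1021", "T1003", "T1078"}
detected = {"T1059", "T1003"}

ratio, missed = coverage_gaps(simulated, detected)
print(f"coverage: {ratio:.0%}, missed: {missed}")
```

The list of missed techniques, more than the percentage, is what feeds the next round of detection engineering.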

Is Your SOC Architecture Causing the Gap?

Detection latency is not a strategic issue. It is an architectural failure that compounds quietly until an incident makes it visible.

Most organizations that modernized did so after an honest audit answered a question their existing tools could not: how long would an attacker actually go undetected?

That question is the beginning.

Book a Latency Detection Assessment

We will benchmark your current mean time to detect, map coverage against MITRE ATT&CK, and identify the highest-leverage modernization points.