The Architecture Was Right, For a Different Era

When the traditional Security Operations Center was designed, the threat landscape had a defining property that no longer holds true: attacks were largely known. Adversaries reused tools. Malware families were cataloged. If you had seen a threat before, you could write a rule for it, and that rule would work for the next variant too.

The entire architecture of the traditional SOC was built on this assumption. Signature databases. Rule engines. SIEM platforms that match incoming events against patterns established from previous incidents. The logic was sound: learn from what attackers did and build defenses that recognize it next time.

That logic no longer describes the environment most enterprises operate in.

The traditional SOC was not built for today’s threat landscape. It was optimized for a threat landscape that no longer exists, and the gap between those two landscapes widens every quarter.

Today’s adversaries iterate faster than rule sets can be updated. They test their tools against commercial detection products before deployment. They use legitimate infrastructure, valid credentials, and native operating system capabilities specifically to avoid triggering signatures. The attacks that matter most are the ones that look like normal activity, until they don’t.

A security operation built to recognize yesterday’s attacks is not a security operation. It is a historical record of threats that no longer represent the leading edge of risk.

How Threat Actors Outpaced Detection Models

The shift did not happen overnight. It followed a pattern that, in retrospect, was predictable: as detection capabilities matured, adversaries adapted their techniques to circumvent them. Each generation of attacker behavior was specifically shaped to be invisible to the detection model that preceded it.

Three structural changes that broke the traditional model

- The professionalization of attack tooling – Ransomware-as-a-service, exploit brokers, and access marketplaces lowered the barrier to entry for sophisticated attacks. Techniques once associated with nation-state actors (living off the land, supply chain infiltration, and prolonged low-and-slow reconnaissance) became commercially available. The volume of novel attack patterns multiplied far faster than rule sets could be updated.

- The deliberate abuse of legitimacy – Modern attacks increasingly avoid introducing anything detectable. They use valid credentials. They abuse tools already installed on the target system. They move through the infrastructure that the organization trusts. There is no malicious binary to scan, no command-and-control IP to block, no signature to write, because the attacker has been careful to look exactly like a legitimate user.

- AI-assisted offensive tooling – Threat actors now use large language models to generate convincing spear-phishing at scale, automate vulnerability scanning, and produce novel malware variants that intentionally evade known signatures. The same technology that enables AI-powered defense is being applied offensively, and in many cases, the offense is moving faster.

When attackers can test their tools against the same detection products your SOC relies on, any detection that depends on recognizing what an attack looks like is already behind.

Threat Evolution Timeline: When the Gap Became a Chasm

The table below maps how the dominant threat type in each era corresponds to the detection architecture required to address it, and where traditional SOC capability began to fall short.

| Era | Dominant threat type | Detection approach required | Traditional SOC fit |
|---|---|---|---|
| 2000-2010 | Worms, known malware, script kiddies | Signature matching, AV, firewalls | Strong: threats were cataloged |
| 2010-2016 | APTs, targeted intrusions, and early ransomware | Behavioral heuristics + signatures | Moderate: some gaps appear |
| 2016-2020 | Ransomware-as-a-service, living-off-the-land | Anomaly detection, EDR telemetry | Weak: volume and novelty overwhelm |
| 2020-2024 | Supply chain, identity attacks, double extortion | Correlation across identity + cloud + endpoint | Very weak: surface too broad |
| 2024-present | AI-assisted attacks, deepfake social engineering, and autonomous adversaries | Predictive modeling, behavioral AI, and real-time correlation | Inadequate: architecture mismatch |

The pattern is consistent across every period: as attacks moved from cataloged to novel, from binary to behavioral, and from single vector to multi-domain, the traditional SOC’s fit degraded, not because of execution failures, but because of architectural ones. The model was not designed for the environment it now operates in.

Critical inflection: The 2024-present row is not a temporary anomaly. AI-assisted offensive tooling, autonomous attack agents, and deepfake social engineering represent structural changes to the threat landscape, not a peak that will recede. The gap between attack sophistication and traditional SOC detection capability will not close without a corresponding architectural shift in defense.

The Detection Gap: What Each Model Actually Catches

The most direct way to understand the gap between traditional and AI-powered detection is to map specific attack types against what each model can and cannot see.

The table below is not a theoretical comparison. Each attack type listed reflects documented enterprise intrusion patterns. The detection outcomes reflect what enterprise security teams have reported in post-incident analysis, not vendor marketing claims.

| Attack type | How it enters | Traditional SOC | AI SOC |
|---|---|---|---|
| Known malware variant | Phishing email attachment | Detected: signature match | Detected + contextualized |
| Zero-day exploit | Unpatched edge device | Missed: no rule exists | Anomaly detection flags deviation |
| Slow credential harvest | Valid login, off-hours pattern | Missed: below rule threshold | Baseline drift detected on Day 1 |
| AI-generated phishing | Spear-phish, no prior signature | Missed: no pattern match | Language model anomaly flagged |
| Living-off-the-land attack | Misuse of native OS tools | Missed: no malicious binary | Behavioral chain triggers an alert |
| Supply chain compromise | Trusted vendor credential | Missed or detected weeks later | Lateral movement flagged in hours |

The pattern across every novel or behavioral threat is the same: traditional SOC detection depends on prior knowledge of what the attack looks like. AI-powered detection depends on understanding what normal looks like, and anything that deviates is examined, regardless of whether it has been seen before.

Signature-based detection answers the question: Have we seen this before? Behavioral AI answers a different question: Does this make sense? Only one of those questions catches what has never been seen.
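The two questions can be made concrete with a minimal Python sketch. Everything in it is an illustrative assumption rather than any product's implementation: the hash values, the `signature_match` and `deviates_from_baseline` helpers, the sample history, and the z-score threshold of 3 are all hypothetical.

```python
from statistics import mean, stdev

# Hypothetical signature set: hashes of previously cataloged malware.
KNOWN_BAD_HASHES = {"e3b0c442", "9f86d081"}


def signature_match(file_hash: str) -> bool:
    """'Have we seen this before?' True only for cataloged hashes."""
    return file_hash in KNOWN_BAD_HASHES


def deviates_from_baseline(history: list[float], observed: float,
                           z_threshold: float = 3.0) -> bool:
    """'Does this make sense?' True when the observation sits far
    outside this entity's own history (simple z-score test)."""
    if len(history) < 2:
        return False  # not enough history to define "normal"
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return observed != mu
    return abs(observed - mu) / sigma > z_threshold


# Hypothetical service account that normally touches about 20 hosts a day.
daily_hosts_accessed = [18, 22, 19, 21, 20, 23, 17]

# A never-before-seen tool has no signature, so the lookup stays silent.
novel_hash_flagged = signature_match("ab12cd34")

# The same account suddenly touching 140 hosts is far outside its baseline.
behavior_flagged = deviates_from_baseline(daily_hosts_accessed, 140)
```

In this sketch the signature lookup returns `False` for the unseen hash, while the baseline check returns `True` for the 140-host day. A real behavioral model would use far richer features than a single count, but the asymmetry is the same.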

Five Real Failures: Same Intrusion, Different Outcomes

Abstract capability comparisons do not convey operational consequences. The failure modes below trace identical intrusion scenarios through each detection model. The divergence in outcomes is not marginal; it is the difference between a contained incident and a disclosed breach.

| Scenario | What the traditional SOC saw | What the AI SOC saw |
|---|---|---|
| Nation-state actor moves laterally for 6 weeks | No alert. Access was authenticated. No rule matched the pattern. | Contextual anomaly on Day 3: a service account accessing systems outside its normal scope. |
| Finance team targeted with AI-generated spear-phish | Email passed spam filters. No malicious payload to scan. No alert raised. | Language behavioral model flagged impersonation patterns in sender metadata on arrival. |
| Ransomware is deployed at 2 am Sunday | Alert generated. Queued for Monday morning review. Encryption complete before triage. | Automated playbook isolated affected endpoints within 4 minutes of the first file modification. |
| Insider slowly exfiltrates IP over 90 days | Each individual transfer was below the threshold. No alert triggered across the full 90-day period. | Cumulative behavioral drift detected at Day 11. Volume + timing + destination flagged as anomalous. |
| Compromised CI/CD pipeline injects a backdoor | No network anomaly. Code signed with a valid certificate. Deployment proceeded normally. | The build process deviated from the established behavioral baseline. Deployment halted for review. |

Across every scenario, the traditional SOC failure was not caused by analyst error. It was caused by the same structural limitation: a detection model that can only recognize threats it has been told to look for. The AI SOC detections were not based on knowing what the attack looked like. They were based on knowing what normal looked like and observing that something had changed.
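The insider exfiltration scenario above shows why per-event thresholds miss slow attacks. The sketch below is a simplified illustration, not a description of how any AI SOC product computes drift: the per-day limit, window length, baseline volume, drift factor, and transfer figures are all hypothetical values chosen for the example.

```python
from collections import deque

PER_DAY_LIMIT_MB = 500     # hypothetical static rule threshold
WINDOW_DAYS = 7            # hypothetical rolling window length
BASELINE_WEEKLY_MB = 600   # hypothetical normal weekly volume for this user
DRIFT_FACTOR = 2.0         # flag when the window exceeds 2x the baseline


def first_rule_alert(daily_mb):
    """Day (1-indexed) on which a single day's volume exceeds the
    static limit, or None if no day ever does."""
    for day, mb in enumerate(daily_mb, start=1):
        if mb > PER_DAY_LIMIT_MB:
            return day
    return None


def first_drift_alert(daily_mb):
    """Day (1-indexed) on which the rolling-window total first exceeds
    DRIFT_FACTOR times the user's baseline, or None."""
    window = deque(maxlen=WINDOW_DAYS)  # keeps only the last 7 days
    for day, mb in enumerate(daily_mb, start=1):
        window.append(mb)
        if sum(window) > DRIFT_FACTOR * BASELINE_WEEKLY_MB:
            return day
    return None


# Five normal days, then steady transfers that each stay under the limit.
transfers = [85] * 5 + [300] * 10

rule_day = first_rule_alert(transfers)    # None: no single day breaches 500 MB
drift_day = first_drift_alert(transfers)  # 8: cumulative volume crosses 1200 MB
```

With these made-up numbers the static rule never fires, while the rolling total crosses the drift limit on day 8. The specific day depends entirely on the assumed parameters; the point is that the signal only exists at the pattern level.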

Why AI SOC Detects What Rules Never Will

The fundamental difference between rule-based and AI-powered detection is not speed or automation. It is the nature of the question each model asks.

A rule engine asks: Does this event match a pattern I have been shown? It is a closed system; its detection surface is bounded by what has previously been written into it. Every rule was written in response to something that already happened. It cannot catch what has never happened before.

A behavioral AI asks: Is this consistent with how this environment, this user, this system, and this workflow normally behave? It is an open system; its detection surface is bounded by what is normal, not by what is known to be malicious. Anything that deviates from the established baseline is a candidate for investigation, regardless of whether it matches any prior attack pattern.

The four capabilities that change detection fundamentals

- Unsupervised anomaly detection: AI identifies statistical outliers in user behavior, system activity, and data flows without requiring a prior example of malicious behavior. A service account that has never accessed a domain controller at 3 am is a deviation worth investigating, even if no rule exists for it.

- Multi-domain correlation at machine speed: An attacker who moves from phishing email to credential compromise to lateral movement to data staging crosses multiple systems and generates signals in multiple logs. A human analyst correlating those signals manually takes hours. An AI model correlating them in real time takes seconds and can connect signals that no individual analyst would link without the full picture in front of them.

- Longitudinal behavioral modeling: Traditional detection operates at the event level – each alert is evaluated in isolation. AI SOC detection operates at the pattern level – it tracks how behavior evolves over time. The slow exfiltration that looks innocuous on any given day becomes detectable when it is evaluated against a 60-day behavioral baseline.

- Self-improving detection accuracy: Every confirmed true positive and every reviewed false positive improves the model. A traditional SOC’s detection capability is static until someone writes a new rule. An AI SOC’s detection capability compounds – the longer it runs in an environment, the more precisely it understands what normal looks like, and the more accurately it flags deviation.

Rules are written by people who have already seen the attack. Behavioral AI detects attacks that no one has seen yet, because it is looking for deviation, not recognition.
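Multi-domain correlation, the second capability above, can be sketched as grouping signals by entity across log sources within a time window. This is a toy version under stated assumptions: the event schema, the sample events, the `correlate` function, the one-hour window, and the three-source minimum are all hypothetical, and a production system would correlate on many more keys than a username.

```python
from collections import defaultdict
from datetime import datetime, timedelta

# Hypothetical, already-normalized events from separate log sources.
events = [
    {"ts": datetime(2024, 5, 1, 9, 2), "source": "email",
     "user": "jdoe", "signal": "suspicious_link_click"},
    {"ts": datetime(2024, 5, 1, 9, 10), "source": "identity",
     "user": "jdoe", "signal": "new_device_login"},
    {"ts": datetime(2024, 5, 1, 9, 40), "source": "endpoint",
     "user": "jdoe", "signal": "remote_admin_tool_use"},
    {"ts": datetime(2024, 5, 1, 9, 15), "source": "identity",
     "user": "asmith", "signal": "new_device_login"},
]


def correlate(events, window=timedelta(hours=1), min_sources=3):
    """Return {user: [signals]} for users whose signals span at least
    min_sources distinct log sources within a single time window."""
    by_user = defaultdict(list)
    for e in sorted(events, key=lambda e: e["ts"]):
        by_user[e["user"]].append(e)

    chains = {}
    for user, evs in by_user.items():
        for i, start in enumerate(evs):
            # Events for this user that fall inside the window opened by `start`.
            in_window = [e for e in evs[i:] if e["ts"] - start["ts"] <= window]
            if len({e["source"] for e in in_window}) >= min_sources:
                chains[user] = [e["signal"] for e in in_window]
                break
    return chains


chains = correlate(events)
```

Here only `jdoe` is returned, because that user's three signals come from three different sources inside one hour. Each signal alone is weak; the chain is what makes it worth an analyst's attention.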

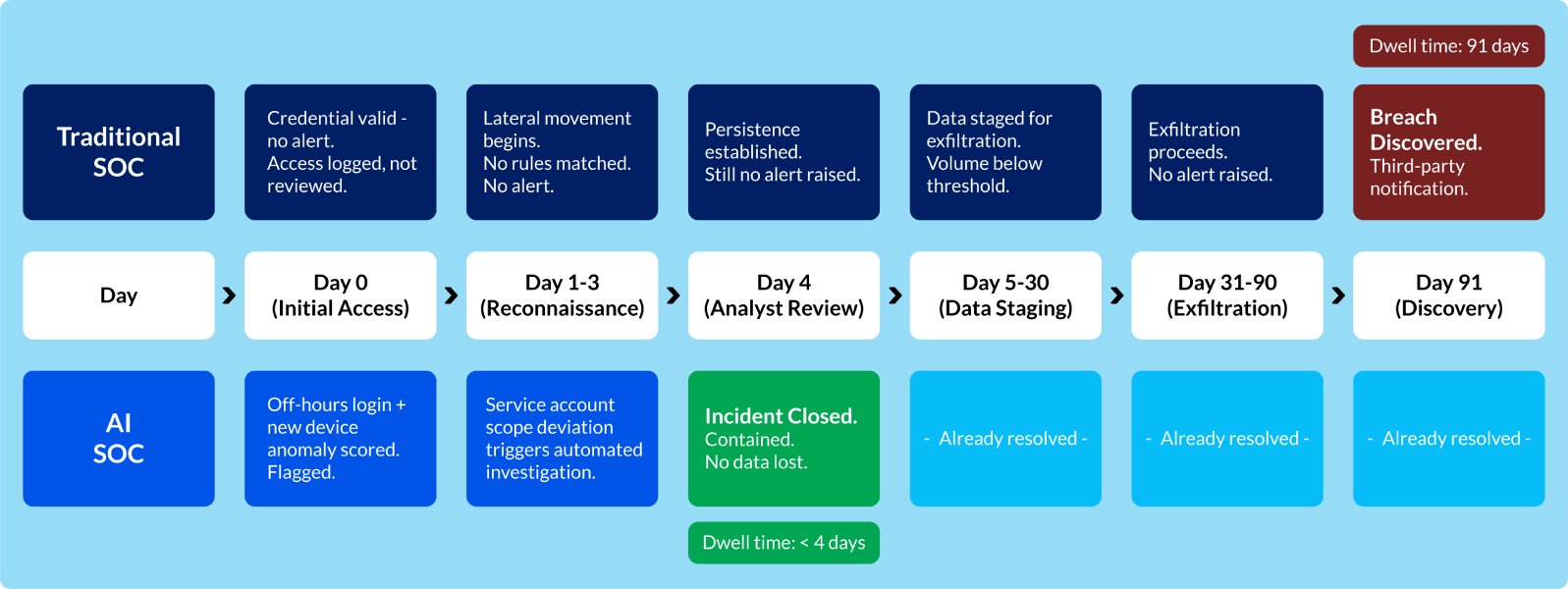

The Dwell Time Problem: A Visual Timeline

Dwell time, the period between initial compromise and detection, is the single most consequential metric in enterprise security. It determines how much damage an adversary can do before they are found. It determines whether a contained incident becomes a disclosed breach.
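As a metric, dwell time is simply the detection timestamp minus the initial-compromise timestamp. A minimal sketch, with a hypothetical `dwell_time` helper and made-up timestamps:

```python
from datetime import datetime, timedelta


def dwell_time(compromised_at: datetime, detected_at: datetime) -> timedelta:
    """Time between initial compromise and detection."""
    if detected_at < compromised_at:
        raise ValueError("detection cannot precede compromise")
    return detected_at - compromised_at


# Illustrative timestamps only, not drawn from any real incident.
initial_access = datetime(2024, 3, 1, 8, 0)
detected = datetime(2024, 3, 4, 14, 30)

dwell = dwell_time(initial_access, detected)
dwell_hours = dwell.total_seconds() / 3600  # 78.5 hours (3 days, 6.5 hours)
```

In practice the hard part is the first timestamp: the moment of initial compromise is usually reconstructed during forensics, so reported dwell times are estimates.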

The timeline below traces the same intrusion through both detection models. The divergence is not a small operational difference. It is the difference between a security incident and a regulatory event.

The dwell time gap is not an outlier. Enterprise post-incident analysis consistently shows that intrusions discovered by internal security teams, rather than by third-party notification or customer complaints, have dramatically shorter dwell times. The architecture that generates that internal discovery is the variable.

Capability Trajectory: Why the Gap Compounds

Perhaps the most important dimension of the traditional vs. AI SOC comparison is not where each model stands today, but where each one is heading. Two systems operating at different capability levels are a manageable problem. Two systems diverging in capability at different rates is a strategic one.

| Capability | Traditional SOC ceiling | AI SOC trajectory |
|---|---|---|
| Detection coverage | Bounded by rules written so far | Expands continuously from new threat data |
| Response speed | Limited by the analyst shift and the triage queue | Seconds for automated response; minutes for complex chains |
| Novel threat detection | Requires a known signature or prior incident | Detects first-ever variants via behavioral deviation |
| Alert fidelity over time | Static: false positive rate does not improve without manual rule updates | Improves: models are retrained on confirmed true/false positives |
| Scale under attack surge | Degrades: analyst capacity is fixed | Stable: AI throughput does not fatigue |
| Cross-domain correlation | Slow: analyst must pull logs from multiple systems manually | Continuous: AI correlates identity, endpoint, cloud, and network in real time |

The compounding dynamic operates in both directions. An AI SOC’s detection capability improves over time as models train on environment-specific data. The longer it runs, the better it knows what normal looks like. A traditional SOC’s detection capability is effectively static: it reflects the rules that have been written and the analyst capacity that has been hired. Adding more analysts raises the ceiling incrementally. Retraining on new data raises it continuously.
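The feedback loop behind that improvement can be illustrated with a deliberately simple stand-in: instead of retraining a model, the sketch below re-tunes a single alert threshold from analyst verdicts. The `tune_threshold` function, the anomaly scores, the verdicts, and the 75% precision target are all hypothetical; real systems retrain far richer models, but the principle of learning from reviewed alerts is the same.

```python
def tune_threshold(labeled, min_precision=0.75):
    """Pick the lowest anomaly-score threshold whose alerts would have met
    min_precision on analyst-reviewed history.

    labeled: list of (anomaly_score, confirmed_malicious) pairs.
    Returns None if no threshold reaches the target.
    """
    for t in sorted({score for score, _ in labeled}):
        # Verdicts for everything that would have alerted at threshold t.
        flagged = [malicious for score, malicious in labeled if score >= t]
        precision = sum(flagged) / len(flagged)
        if precision >= min_precision:
            return t
    return None


# Hypothetical analyst verdicts on past alerts: (score, confirmed_malicious).
reviewed = [
    (0.2, False), (0.4, False), (0.5, True), (0.6, False),
    (0.7, True), (0.8, True), (0.9, True),
]
threshold_before = tune_threshold(reviewed)

# Two newly reviewed false positives arrive and the threshold is re-tuned.
new_verdicts = [(0.5, False), (0.55, False)]
threshold_after = tune_threshold(reviewed + new_verdicts)
```

With this made-up history the threshold moves from 0.5 to 0.6 once the two false positives are reviewed. A static rule set has no equivalent step: its threshold stays where it was until someone edits it by hand.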

The strategic implication: An enterprise that defers AI SOC adoption is not maintaining the status quo; it is falling further behind. Threat actors are investing in AI-assisted offensive tooling now. The detection capability gap that exists today will be larger in 18 months if the architecture does not change.

What the Shift Demands from Enterprise Leadership

The transition from a traditional to an AI-powered SOC is not primarily a technology procurement decision. It is an architectural one, and it requires the same quality of strategic attention that any foundational infrastructure change demands.

For C-suite leaders who do not manage security day-to-day, the question is not which vendor to select. It is whether the organization’s security operating model is structurally capable of detecting the attacks that are actually being launched against it today, not in 2019.

Three questions that define the strategic position

- What percentage of your current detection capability depends on known threat signatures?

- If an adversary has been inside your environment for 30 days using only valid credentials and native tools, would your current SOC find them?

- Is your security architecture’s detection ceiling growing, or is it the same as it was two years ago?

The answers to these questions define the actual security posture: not the compliance posture or the audit posture, but whether a sophisticated adversary operating inside your environment right now would be detected before the damage is done.

What the transition looks like in practice

- It is not a rip-and-replace: Traditional SOC functions such as incident response, forensic investigation, and escalation handling remain necessary. The transition introduces AI as the primary detection and triage layer, not as a replacement for human judgment in complex investigations.

- It is not primarily a headcount reduction: The analyst role changes from primary alert reviewer to high-confidence escalation handler. The value of experienced analysts increases in an AI SOC; their judgment is applied where it matters most, not exhausted on noise.

- It is not a one-time implementation: AI SOC effectiveness compounds over time. Models improve with environment-specific data. Detection accuracy increases as normal baselines become more precisely defined. The investment case is longitudinal, not point-in-time.

The goal is not to generate more alerts. The goal is to detect the attacks that will actually cause damage, including the ones that look like nothing at all until they are deliberately sought.

The window to act is narrowing.

The gap between traditional SOCs and AI-powered SOCs is no longer theoretical; it is operational, measurable, and widening every quarter.

Enterprises today are not dealing with more alerts; they are dealing with fundamentally different attacks: ones that blend into normal behavior, bypass signatures, and evolve faster than rules can keep up.

The question is no longer whether your SOC is functioning, but whether it can detect what actually matters today.

Organizations that continue to rely solely on rule-based detection are not maintaining stability; they are accumulating invisible risk.

The shift to an AI-powered SOC is not about replacing what exists. It is about closing the detection gap:

- From reactive → predictive

- From event-based → behavior-based

- From delayed response → real-time containment

At Prudent Consulting, we help enterprises bridge this gap with AI-led detection, real-time threat correlation, and modern security architectures.

Explore more:

https://www.prudentconsulting.com/cybersecurity/

Because the reality is simple:

👉 The attacks you already know how to detect are not the ones that will cause the next breach.

👉 The risk lies in what your current SOC cannot see.