The Sprint That Proved the Point

In Q3 2023, a 60-person engineering team at a B2B SaaS company received the results of their annual penetration test. The report identified 23 findings. Eleven were rated critical or high severity.

The engineering leadership team convened an emergency sprint. Two senior developers were pulled from their product roadmap work. The sprint lasted three weeks and remediated seven of the eleven critical and high findings. The remaining four required architectural changes that took an additional six weeks to scope, approve, and implement.

When the total engineering effort was tallied (developer time, QA re-testing, security review, and deployment cycles), remediation had consumed the equivalent of one full senior developer for an entire quarter. The cost of delayed product features was not calculated, but the roadmap slipped by six weeks.

The penetration test cost $40,000. The remediation cost approximately $180,000 in engineering time alone, before accounting for the delayed product releases. And every one of those vulnerabilities had been introduced into the codebase months earlier, in sprints where fixing them would have taken a developer 15 minutes.

This is not an unusual story. It is the default outcome for engineering organizations that treat security as a post-development activity. The numbers are consistent enough that they form the foundation of a business case, one that engineering leadership, once they see it framed correctly, finds difficult to argue against.

Why Engineering Leadership Resists, And Why the Resistance Is Based on the Wrong Numbers

The standard objections to DevSecOps investment from engineering leadership are consistent across organizations, and they all share a common structure: they frame DevSecOps as an additional cost without accounting for the cost it eliminates.

The three objections and what they are actually saying

- It will slow down our delivery velocity: This objection assumes that adding security checks to the pipeline creates friction. It is correct in the short term. What it ignores is that the alternative, reactive security, causes far greater velocity disruption in the form of emergency sprints, hotfix deployments, and pen test remediation cycles that consume planned capacity unpredictably and at the worst possible time.

- Our developers are not security engineers: This is accurate. DevSecOps does not ask developers to become security engineers. It asks them to receive security findings the same way they already receive code quality findings: as automated feedback in their existing workflow, at the moment the code is written, with specific remediation guidance. The learning curve is a pipeline configuration problem, not a hiring problem.

- We already have a security team for this: Having the security team triage production vulnerabilities is the most expensive possible use of security expertise. DevSecOps does not replace the security team. It frees them from reactive triage to do the work that actually requires security expertise: threat modeling, architecture review, red team exercises, and SOC integration.

The objections to DevSecOps are not wrong about what it costs. They are wrong about what that cost should be compared against. The comparison is not DevSecOps vs. nothing. It is DevSecOps vs. the current cost model, which already pays for security, just at the most expensive possible stage.

The Actual Cost Model: What Fixing Vulnerabilities Late Really Costs

The foundational number in the DevSecOps business case is the cost multiplier for fixing vulnerabilities at different stages of the software development lifecycle. The research behind this number is consistent across multiple studies spanning two decades of software engineering data, and it represents the single most powerful argument available to anyone making the case to engineering leadership.

The principle is straightforward: the later a vulnerability is discovered in the development lifecycle, the more expensive it is to fix, not linearly, but exponentially. A vulnerability caught in design costs roughly one unit of effort to resolve. The same vulnerability caught after production deployment costs between 45 and 100 times more.

| Stage at which the vulnerability is found | Relative cost | Time to fix | Production risk exposure |
|---|---|---|---|
| Design phase (caught in requirements) | 1× baseline | Hours | Zero: never built |
| Development (caught in code review) | 6× baseline | Hours to days | Zero: never deployed |
| QA / staging (caught in testing) | 15× baseline | Days | Minimal: pre-production |
| Production (caught by internal scan) | 45× baseline | Weeks: regression testing and redeploy cycle | Active: every hour until patched |
| Production (discovered during an incident) | 100×+ baseline | Weeks to months: incident response, forensics, rebuild | Realized: breach, data loss, or service disruption |

The multipliers in this table are not theoretical. They reflect the compounding costs of: developer context loss between introduction and discovery, regression testing required after a late fix, deployment cycles in production environments, incident response overhead when vulnerabilities are discovered during exploitation, and the opportunity cost of engineering capacity pulled from product development.

The critical insight for engineering leadership: Every sprint that ships without security gates is not avoiding security cost; it is deferring it at a 15-to-100-times multiplier. DevSecOps does not add a security cost to the engineering process. It moves a cost that already exists to the stage where it is cheapest to pay.
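To make the deferral arithmetic concrete, here is a minimal sketch that applies the multipliers from the table to a single finding. It assumes a hypothetical design-phase baseline of 2 engineering hours and treats the incident multiplier as a floor of 100×. The stage names and functions are illustrative and are not part of any tool.

```python
# Relative fix-cost multipliers from the table above (design phase = 1x).
# The incident multiplier is a floor: the table lists it as 100x or more.
STAGE_MULTIPLIER = {
    "design": 1,
    "development": 6,
    "qa_staging": 15,
    "production_scan": 45,
    "production_incident": 100,
}


def fix_cost(baseline_hours: float, stage: str) -> float:
    """Estimated hours to fix one finding when it is caught at `stage`."""
    return baseline_hours * STAGE_MULTIPLIER[stage]


def deferral_premium(baseline_hours: float, caught_at: str, catchable_at: str) -> float:
    """Extra hours paid because a finding was caught later than it could have been."""
    return fix_cost(baseline_hours, caught_at) - fix_cost(baseline_hours, catchable_at)


if __name__ == "__main__":
    baseline = 2.0  # hypothetical design-phase cost in engineering hours
    for stage in STAGE_MULTIPLIER:
        print(f"{stage:>20}: {fix_cost(baseline, stage):6.1f} hours")
    print("Premium for production-scan vs code-review catch:",
          deferral_premium(baseline, "production_scan", "development"), "hours")
```

Under those assumptions, a finding that would take 12 hours to fix in code review takes 90 hours once a production scan catches it. That difference is a 78-hour deferral premium for one finding.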

Where DevSecOps Fits in the Engineering Lifecycle

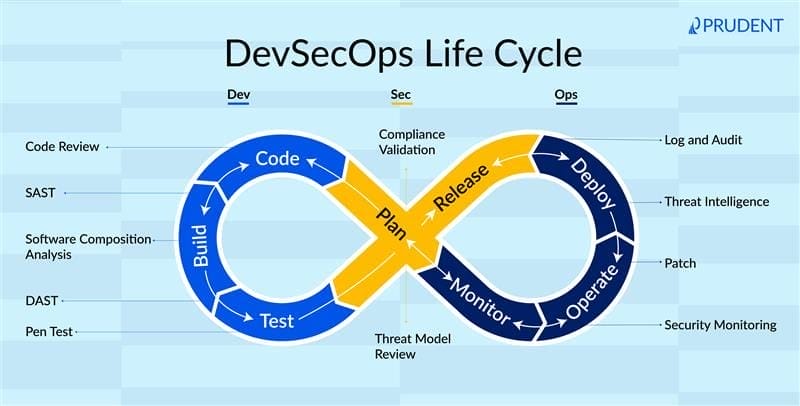

The diagram above maps the security activities that belong at each phase of the engineering lifecycle against the cost multiplier that applies when a vulnerability is discovered at that phase instead of earlier. The layout makes the cost argument visual: every phase to the right of development represents a security finding that costs progressively more to resolve.

Before and After: What Changes When Security Shifts Left

The operational impact of DevSecOps is visible across every dimension of the engineering function, not just in the security metrics, but in the velocity, compliance, and operational cost metrics that engineering leadership actually manages against.

| Dimension | Without DevSecOps | With DevSecOps embedded |
|---|---|---|
| Where vulnerabilities are found | Post-deployment: production scans, pen tests, incidents | Pre-deployment: IDE, PR review, CI/CD pipeline gates |
| Developer context at fix time | Zero: the fix is assigned, often to a different team, weeks after the code was written | Full: the developer fixes the vulnerability in the same sprint in which it was introduced |
| Security team workload | Escalating: triaging production vulnerabilities across an accumulating backlog | Decreasing over time: fewer production issues as shift-left matures |
| Deployment velocity | Constrained: security reviews create pre-release bottlenecks | Accelerating: automated gates remove human-gated review from the critical path |
| Compliance evidence | Manual: assembled at audit time from disparate system exports | Continuous: the pipeline generates audit artifacts automatically with every build |
| Mean time to remediate | Weeks to months: production queue, prioritization, regression testing | Hours to days: same sprint, full developer context, no regression queue |
| SOC visibility into code risk | None: the SOC has no view into what vulnerabilities exist in deployed code | Continuous: the SOC receives a live feed of known vulnerabilities in production |

The column that most surprises engineering leaders when they see it for the first time is deployment velocity. The assumption is that adding security checks slows releases. The experience of organizations that have embedded DevSecOps consistently shows the opposite: automated security gates in the pipeline remove the human-gated security review that was the actual bottleneck, and release velocity increases as a result.

Five Failures That DevSecOps Prevents, And What They Cost Without It

Each scenario below is drawn from documented enterprise security failures where the root cause was a vulnerability introduced in development and not discovered until production or later. In every case, the vulnerability was detectable at the point of code commit with standard DevSecOps tooling.

| Scenario | Without DevSecOps | With DevSecOps |
|---|---|---|
| Hardcoded API key committed to a public repository | Discovered by an external researcher 11 days later. The key was live and had broad permissions. Incident response, credential rotation, forensic review, and 3 weeks of engineering time. | SAST scan flags the hardcoded credential in PR review. The developer removes it before merging. Zero exposure. Fix time: 15 minutes. |
| Vulnerable open-source dependency deployed to production | Dependency added in Q1. CVE published in Q2. Pen test discovered an exploit path in Q3. Remediation completed in Q4. 9-month exposure window. | Software composition analysis flags the CVE at PR review in Q2. Dependency updated before merge. Exposure window: zero days. |
| Authentication bypass in a new feature release | Feature shipped to 40,000 users. Vulnerability discovered by a bug bounty researcher 3 weeks post-launch. Emergency rollback, patch, redeploy. Customer communication required. | DAST scan catches the authentication flaw in the staging pipeline. Feature blocked from release and fixed in development. Zero customer exposure. |
| Container image with a critical CVE promoted to production | Image built on an unpatched base. Vulnerability not discovered until the quarterly scan. 6 weeks in production before remediation. The SOC had no detection rule for the exploit pattern. | Container image scanning in CI/CD blocks promotion. Base image updated. SOC notified of patched status. Exposure window: zero days. |
| Secrets exposed in application logs shipped to a third-party logging platform | Log export ran for 4 months before it was discovered in a security review. Third-party data handling policy gap. Regulatory notification required. | Secrets detection in the pipeline flags the log output pattern before deployment. Developer redirects sensitive data. No third-party exposure. |

The pattern across every scenario is identical: the vulnerability was not sophisticated. It was not a zero-day. It was the kind of finding that automated security scanning catches reliably in development pipelines, and that costs orders of magnitude more when it is instead discovered in production, by a researcher, or during an incident.
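Several of these findings, such as the hardcoded key and the leaked secrets, are pattern-level problems, which is why they are cheap to catch at commit time. The sketch below illustrates only the secrets-detection idea. It scans the added lines of a unified diff for a few credential patterns. The rule names, patterns, and fake values in the example are hypothetical. Dedicated scanners such as gitleaks or TruffleHog use much larger, tuned rule sets plus entropy checks.

```python
import re

# Illustrative rules only; a small hypothetical subset of what real scanners check.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "generic_secret_assignment": re.compile(
        r"""(?i)\b(api[_-]?key|secret|password|token)\b\s*[:=]\s*["'][^"']{8,}["']"""
    ),
    "private_key_header": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
}


def scan_added_lines(unified_diff: str) -> list[tuple[int, str, str]]:
    """Return (diff line number, rule name, line text) for added lines that match a rule."""
    findings = []
    for lineno, line in enumerate(unified_diff.splitlines(), start=1):
        # Only lines the change adds matter; skip the "+++ b/<file>" header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, rule, line))
    return findings


if __name__ == "__main__":
    # Fake credentials for demonstration; neither value is a real key.
    diff = (
        "+++ b/config.py\n"
        '+AWS_KEY = "AKIAABCDEFGHIJKLMNOP"\n'
        "+timeout = 30\n"
        '+api_key = "sk_test_1234567890"\n'
    )
    for lineno, rule, _ in scan_added_lines(diff):
        print(f"diff line {lineno}: {rule}")  # a CI check would fail on any finding
```

Scanning only added lines keeps feedback scoped to the pull request that introduced the problem. That scoping is what lets the author fix it with full context.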


The ROI Model: Quantifying What the Business Case Is Built On

The ROI case for DevSecOps is not constructed from vendor claims or projected future savings. It is built from the current cost model: the actual engineering hours, sprint disruptions, and incident response cycles that the organization already pays for every year as a consequence of finding vulnerabilities at the wrong stage.

The table below maps the cost categories that a DevSecOps program directly reduces, with conservative reduction estimates based on documented enterprise outcomes. Organizations that have implemented mature DevSecOps programs consistently report outcomes at the higher end of these ranges.

| Cost category | Without DevSecOps (annual) | With DevSecOps (annual) | Reduction |
|---|---|---|---|
| Production vulnerability remediation (engineering hours) | 1,200–2,400 hrs/yr | 200–400 hrs/yr | ~83% |
| Release delays from security review bottlenecks | 8–12 delayed releases/yr | 1–2 delayed releases/yr | ~85% |
| Compliance evidence assembly (audit preparation) | 300–600 hrs/yr | 40–80 hrs/yr | ~87% |
| Incident response (security-related deployment issues) | 2–4 significant incidents/yr | 0–1 incidents/yr | ~75% |
| Pen test finding remediation cycle (sprint disruption) | 1–2 disrupted sprints/yr per team | Minimal: most findings prevented upstream | ~70% |
| Developer context-switching cost (late vulnerability fix) | High: fixing cold code weeks later | Low: fixing warm code in the same sprint | ~60% |
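The first four reduction figures can be checked against the ranges in the table above. The sketch below computes the percentage reduction at the low and high ends of each range. It pairs low with low and high with high, which is an assumption about how the ranges are meant to be read. The incident row, for example, spans 75% to 100%, so ~75% is its conservative end. The last two rows are qualitative estimates and cannot be derived this way.

```python
def reduction(before: float, after: float) -> float:
    """Percentage reduction from `before` to `after`."""
    return (before - after) / before * 100


def reduction_range(b_lo: float, b_hi: float, a_lo: float, a_hi: float) -> tuple[float, float]:
    """Reduction at each end of the ranges, pairing low-with-low and high-with-high."""
    ends = (reduction(b_lo, a_lo), reduction(b_hi, a_hi))
    return min(ends), max(ends)


# (without_low, without_high, with_low, with_high), copied from the table above.
ROWS = {
    "remediation hours": (1200, 2400, 200, 400),
    "delayed releases": (8, 12, 1, 2),
    "audit prep hours": (300, 600, 40, 80),
    "significant incidents": (2, 4, 0, 1),
}

if __name__ == "__main__":
    for name, row in ROWS.items():
        low, high = reduction_range(*row)
        print(f"{name:>22}: {low:.0f}% to {high:.0f}%")
```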

How to build the number for your organization

The business case calculation for a specific organization requires four inputs, all of which are available from existing engineering and security operations data:

- Annual engineering hours spent on production vulnerability remediation: Pull from sprint retrospectives, incident tickets, and security finding closure records. This is almost always higher than engineering leadership expects when the full accounting is done.

- Number of releases delayed by security reviews in the past 12 months: Measure against planned release dates. Include the cost of the delay in terms of feature delivery, not just engineering time.

- Hours spent assembling compliance evidence at audit time: This is a direct DevSecOps ROI item; pipeline-generated artifacts eliminate most of this manually assembled work.

- Number and cost of security-related incidents in the past 24 months: Include incident response time, external consultants if used, and any regulatory or customer notification costs.

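Put those four numbers on a common annual, dollar basis and sum them. That means multiplying hours by a loaded hourly rate, assigning a cost to each delayed release, and halving the 24-month incident total. The result is the current annual cost of not having DevSecOps. The cost of implementing it (tooling, training, and pipeline integration) is almost always a fraction of that number in year one. The savings then compound as the program matures and fewer vulnerabilities reach production.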
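A minimal sketch of that calculation follows. It assumes a single loaded hourly rate for engineering time and a flat cost per delayed release. Both rates and all example figures are hypothetical placeholders to be replaced with your own data. The 24-month incident figure is halved to put it on an annual basis.

```python
from dataclasses import dataclass


@dataclass
class CurrentCostInputs:
    """The four inputs listed above, as pulled from engineering and security records."""
    remediation_hours_per_year: float
    delayed_releases_per_year: int
    compliance_hours_per_year: float
    incident_cost_past_24_months: float


def annual_cost_without_devsecops(
    inputs: CurrentCostInputs,
    loaded_hourly_rate: float,        # assumption: one blended rate for engineering time
    cost_per_delayed_release: float,  # assumption: flat cost of one delayed release
) -> float:
    """Put the four inputs on a common annual, monetary basis and sum them."""
    remediation = inputs.remediation_hours_per_year * loaded_hourly_rate
    release_delays = inputs.delayed_releases_per_year * cost_per_delayed_release
    compliance = inputs.compliance_hours_per_year * loaded_hourly_rate
    incidents = inputs.incident_cost_past_24_months / 2  # annualize the 24-month total
    return remediation + release_delays + compliance + incidents


if __name__ == "__main__":
    # Hypothetical placeholder figures, not benchmarks.
    example = CurrentCostInputs(
        remediation_hours_per_year=1_800,
        delayed_releases_per_year=10,
        compliance_hours_per_year=450,
        incident_cost_past_24_months=300_000,
    )
    total = annual_cost_without_devsecops(example, loaded_hourly_rate=100, cost_per_delayed_release=15_000)
    print(f"Current annual cost of late security work: ${total:,.0f}")
```

With these placeholder inputs the sum is $525,000 a year. That is the figure to set against your own estimate for tooling, training, and pipeline integration.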

Reframing the Conversation with Engineering Leadership

The most common reason DevSecOps business cases fail in front of engineering leadership is framing. When security teams present DevSecOps as a security investment, engineering leaders evaluate it against their security budget and competing security priorities. When it is presented as an engineering efficiency investment, one that reduces the most expensive and disruptive category of unplanned work in the development cycle, the evaluation framework changes entirely.

| The question engineering leadership asks | The question that answers it correctly |
|---|---|
| How much will DevSecOps cost to implement? | What is the current annual cost of fixing vulnerabilities after they reach production? |
| Will this slow down our release velocity? | How many releases are already being delayed by late-stage security reviews that we could eliminate? |
| Do our developers have time to learn security practices? | How much developer time is currently spent fixing security issues they did not introduce and have no context for? |
| Is this a security team problem or an engineering problem? | Who pays the sprint disruption cost when a pen test finding requires a hotfix? Engineering does, every time. |
| Can we phase this in gradually? | Every sprint without shift-left security is producing vulnerabilities that will cost 15–100× more to fix later. The phasing question is about how fast to reduce that cost. |

The argument that closes with engineering leadership is not about risk tolerance or compliance requirements. It is about the fact that their teams are already doing security work; they are just doing it in the most expensive, most disruptive, and least effective way possible. DevSecOps does not add that work. It moves it.

What the Implementation Actually Looks Like

One of the reasons DevSecOps business cases stall is that the implementation is presented as a large, high-risk program change. In practice, the highest-value DevSecOps capabilities are additive to existing pipelines and can be implemented incrementally, with measurable ROI at each stage.

Phase 1: Highest ROI, lowest friction (two to four weeks)

Add static application security testing (SAST) and software composition analysis (SCA) to the existing CI/CD pipeline. Configure findings to surface in the developer's existing pull request workflow rather than in a separate security tool. Add secrets detection to prevent credential exposure in repositories. These three controls catch the majority of the vulnerability classes that generate the most expensive production findings. They also require no changes to how developers write code, only to how the pipeline reports feedback.
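At its core, software composition analysis asks one question: does any pinned dependency fall below the first fixed version named in a known advisory? The sketch below answers it for `name==version` pins with plain numeric versions. The package names and advisory IDs are hypothetical. Real SCA tools also resolve transitive dependencies and full version-specifier semantics, which this sketch does not.

```python
from dataclasses import dataclass


def parse_version(text: str) -> tuple[int, ...]:
    """Parse a plain dotted numeric version; trailing zeros are dropped so 1.4 == 1.4.0."""
    parts = [int(p) for p in text.strip().split(".")]
    while len(parts) > 1 and parts[-1] == 0:
        parts.pop()
    return tuple(parts)


@dataclass(frozen=True)
class Advisory:
    package: str
    advisory_id: str  # hypothetical ID; real feeds use CVE or GHSA identifiers
    fixed_in: str     # first version that contains the fix


def parse_pins(requirements: str) -> dict[str, tuple[int, ...]]:
    """Read exact `name==version` pins, ignoring comments and blank lines."""
    pins = {}
    for raw in requirements.splitlines():
        line = raw.split("#", 1)[0].strip()
        if "==" in line:
            name, version = line.split("==", 1)
            pins[name.strip().lower()] = parse_version(version)
    return pins


def vulnerable_pins(requirements: str, advisories: list[Advisory]) -> list[tuple[str, str]]:
    """Return (package, advisory_id) for every pin older than the advisory's fixed version."""
    pins = parse_pins(requirements)
    hits = []
    for adv in advisories:
        installed = pins.get(adv.package.lower())
        if installed is not None and installed < parse_version(adv.fixed_in):
            hits.append((adv.package, adv.advisory_id))
    return hits


if __name__ == "__main__":
    requirements = """
    examplelib==1.4.0   # hypothetical package
    otherlib==2.1
    """
    advisories = [
        Advisory("examplelib", "ADVISORY-0001", fixed_in="1.4.2"),
        Advisory("otherlib", "ADVISORY-0002", fixed_in="2.0.5"),
    ]
    print(vulnerable_pins(requirements, advisories))  # a non-empty list fails the PR check
```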

Phase 2: Coverage expansion (four to eight weeks)

Add container image scanning to the build stage. Implement infrastructure-as-code security scanning to catch cloud misconfigurations before they are deployed. Configure pipeline policy gates that block promotion of images with critical unpatched CVEs. At this stage, the pipeline is preventing an entire class of misconfiguration-related exposure that is invisible to code-level scanning.
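A promotion gate is essentially a policy function over the image scanner's output. The sketch below assumes the scan results have already been parsed into a hypothetical `ImageFinding` record. It blocks promotion when any critical finding is present that has not been explicitly accepted in an allow-list. A CI job would then fail the promotion step whenever `allowed` is False.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImageFinding:
    vuln_id: str
    severity: str  # e.g. "CRITICAL", "HIGH", "MEDIUM", "LOW", as reported by the scanner


def promotion_decision(
    findings: list[ImageFinding],
    blocking_severities: frozenset[str] = frozenset({"CRITICAL"}),
    accepted_risks: frozenset[str] = frozenset(),
) -> tuple[bool, list[str]]:
    """Return (allowed, blocking_ids): block on any blocking-severity finding not explicitly accepted."""
    blocking = sorted(
        f.vuln_id
        for f in findings
        if f.severity.upper() in blocking_severities and f.vuln_id not in accepted_risks
    )
    return (not blocking, blocking)


if __name__ == "__main__":
    scan = [ImageFinding("VULN-0001", "CRITICAL"), ImageFinding("VULN-0002", "HIGH")]
    allowed, blocking = promotion_decision(scan)
    print("promote" if allowed else f"blocked by {blocking}")
```

Keeping accepted risks in an explicit allow-list makes every exception visible in code review instead of silently ignored by the gate.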

Phase 3: Dynamic coverage and SOC integration (eight to twelve weeks)

Add dynamic application security testing (DAST) to the staging environment. Connect the vulnerability program's output to the SOC, so that every known vulnerability in production code is visible to the SOC as detection context. Implement continuous compliance artifact generation from pipeline events. At this stage, the DevSecOps program is not just reducing development-phase risk. It is also improving the SOC's ability to detect attempts to exploit known vulnerabilities during the window before they are patched.
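Continuous compliance evidence can be as simple as one structured record per build. The sketch below emits a JSON-serializable record containing the build ID, commit, scan summaries, and gate result. It adds a SHA-256 digest of the record's canonical form so that later edits are detectable. The field names are assumptions. A digest stored alongside the record only detects accidental changes, so genuine tamper evidence would also need signing or append-only storage.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding (sorted keys, no extra whitespace)."""
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def build_evidence_record(
    build_id: str,
    commit_sha: str,
    scan_summaries: dict,
    gate_passed: bool,
    now: Optional[datetime] = None,
) -> dict:
    """One audit record per pipeline run; all field names are illustrative."""
    record = {
        "build_id": build_id,
        "commit": commit_sha,
        "generated_at": (now or datetime.now(timezone.utc)).isoformat(),
        "scans": scan_summaries,  # e.g. {"sast": {"critical": 0, "high": 2}}
        "gate_passed": gate_passed,
    }
    record["sha256"] = _digest(record)  # digest is computed before the key is added
    return record


def verify_evidence_record(record: dict) -> bool:
    """True if the record still matches the digest computed when it was created."""
    body = {k: v for k, v in record.items() if k != "sha256"}
    return _digest(body) == record.get("sha256")


if __name__ == "__main__":
    rec = build_evidence_record(
        "build-1042", "3f2c9ab", {"sast": {"critical": 0, "high": 2}, "sca": {"critical": 0}}, True
    )
    print(json.dumps(rec, indent=2))
```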

Phase 4: Threat-informed prioritization and developer enablement (ongoing)

Integrate threat intelligence into pipeline prioritization, elevating findings that correspond to vulnerability classes currently being exploited by adversaries targeting your sector. Build developer security enablement into the engineering onboarding process. Establish metrics that make the ROI of the program continuously visible to engineering leadership: mean time to remediate, findings-by-phase distribution, and production vulnerability trend.
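The first two of those metrics can be computed directly from finding records. The sketch below assumes a hypothetical `Finding` record that holds the phase where the finding was detected plus its open and close timestamps. Mean time to remediate ignores findings that are still open, which is a simplifying choice. A shift-left program should show the pre-production share of findings rising over time.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime
from statistics import mean
from typing import Optional


@dataclass
class Finding:
    phase_found: str                   # e.g. "pr", "ci", "staging", "production"
    opened: datetime
    closed: Optional[datetime] = None  # None while the finding is still open


def mean_time_to_remediate_hours(findings: list[Finding]) -> Optional[float]:
    """Average open-to-close time in hours across closed findings only."""
    durations = [(f.closed - f.opened).total_seconds() / 3600 for f in findings if f.closed]
    return mean(durations) if durations else None


def findings_by_phase(findings: list[Finding]) -> dict[str, float]:
    """Share of all findings detected in each phase."""
    counts = Counter(f.phase_found for f in findings)
    total = sum(counts.values())
    return {phase: n / total for phase, n in counts.items()} if total else {}


if __name__ == "__main__":
    findings = [
        Finding("pr", datetime(2024, 1, 1, 9), datetime(2024, 1, 1, 13)),
        Finding("production", datetime(2024, 1, 1), datetime(2024, 1, 3)),
        Finding("pr", datetime(2024, 1, 2, 10)),
    ]
    print("MTTR (hours):", mean_time_to_remediate_hours(findings))
    print("Findings by phase:", findings_by_phase(findings))
```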

The implementation failure to avoid: Deploying DevSecOps tooling without integrating findings into the developer workflow. Security tools that generate reports nobody reads, or findings that land in a separate security queue rather than the developer’s pull request, reproduce the same disconnection that makes reactive security expensive. The value is in the integration, not the tooling.

Working with Prudent

At Prudent, we help engineering and security leadership teams build the DevSecOps business case from their actual cost data, not vendor benchmarks, and design the implementation architecture that delivers measurable ROI within the first quarter of deployment.

The starting point is always the same: an honest accounting of what the current security model is actually costing the engineering organization, and where in the pipeline that cost can be moved to produce the highest return. That conversation almost always changes how the investment decision is framed and how quickly it gets made.

If your organization is ready to move from reactive to shift-left security, the data you need to make the case is already in your sprint records. We can help you find it, frame it, and present it in a way that closes the argument.