The Problem with Intelligence That Arrives Too Late

In March 2024, a mid-sized logistics company received a threat advisory from its sector ISAC. The advisory described an active ransomware campaign targeting supply chain infrastructure, named the threat actor, listed the command-and-control domains being used, and described the initial access technique in detail. The advisory sat in an analyst’s inbox for four days. The analyst was triaging a backlog of 3,000 unreviewed alerts.

On day six, ransomware was deployed. The C2 domain from the advisory was in the firewall logs for five of those six days.

The organization had the intelligence. They did not have a system that could act on it. The gap between knowing and detecting is exactly the problem that integrating threat intelligence into an AI SOC is designed to close.

This is not a staffing failure. It is an architectural one. Threat intelligence delivered to a human inbox and threat intelligence ingested directly into an AI detection model are not the same thing, and the operational difference between them often determines whether an incident becomes a breach.

What Threat Intelligence Actually Is, and What It Is Not

Threat intelligence is specific, evidence-based knowledge about the adversaries, techniques, and infrastructure being used to target organizations like yours, right now, in your sector, against your technology stack.

That definition matters because threat intelligence is frequently confused with three things it is not:

- Not a threat feed: A raw IOC feed, such as a list of malicious IPs or file hashes, is data, not intelligence. Intelligence requires context: who is using this infrastructure, what campaign it is part of, what they do next, and why your organization is a plausible target.

- Not a vulnerability scanner: Knowing your systems have unpatched CVEs is asset risk data. Knowing that a specific threat actor is actively exploiting that CVE against your sector this week is threat intelligence.

- Not a compliance checkbox: Subscribing to a TI feed to satisfy an audit requirement and integrating that intelligence into your detection model are operationally different by an order of magnitude.

Real threat intelligence answers four questions:

- Who is likely to target us?

- How are they getting in?

- What do they do once inside?

- And how do their techniques map to our current detection coverage?

The value of threat intelligence is not in knowing that threats exist. It is in knowing which threats are active, which techniques they are using right now, and whether your detection architecture can see those techniques as they happen.

Why AI SOC Without Threat Intelligence Is Only Half a System

An AI SOC without threat intelligence is a system that can detect deviation but cannot interpret it. It knows something unusual is happening. It does not know whether that unusual thing is the first stage of a known ransomware campaign, a nation-state reconnaissance operation, or a misconfigured monitoring tool.

This distinction has direct operational consequences. An AI model that detects a behavioral anomaly and surfaces it as a generic alert creates work for an analyst. An AI model that detects the same anomaly, correlates it against active campaign TTPs, attributes it to a known threat actor targeting your sector, and surfaces it with a recommended containment action creates a decision. The difference is threat intelligence.

The Three Things Threat Intelligence Gives an AI SOC That It Cannot Generate Internally

- Adversary context: AI models learn from your environment’s historical data. They are excellent at detecting that something has changed. They cannot tell you who changed it, why, or what they are likely to do next; that context comes from external intelligence about the adversaries who operate in your threat landscape.

- Campaign-level correlation: Individual alerts look isolated. Threat intelligence reveals the campaign structure connecting them. The phishing email, the credential harvest, and the lateral movement three days later are events from a single playbook; TI is what connects the dots.

- Predictive anticipation: When a TI feed reports that a threat actor has begun targeting your sector, an AI SOC with integrated TI can immediately elevate its detection sensitivity for the TTPs associated with that actor, before the first indicator appears in your environment. That is not reactive detection. It is anticipatory defense.
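The predictive-anticipation idea in the list above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any particular product: the threshold values, the `ActorReport` structure, and the actor name are hypothetical, and the technique IDs are ordinary MITRE ATT&CK identifiers used only as labels.

```python
from dataclasses import dataclass

# Hypothetical values: the anomaly score (0 to 1) a behavior must reach to alert.
BASELINE_THRESHOLD = 0.80
# Hypothetical lower bar for techniques tied to an actor targeting our sector.
ELEVATED_THRESHOLD = 0.60


@dataclass
class ActorReport:
    """A simplified TI report: who the actor is, whom they target, and how."""
    actor: str
    target_sectors: set
    techniques: set  # MITRE ATT&CK technique IDs


def build_thresholds(reports, our_sector, known_techniques):
    """Start every technique at baseline, then lower the bar for techniques
    used by actors reported as targeting our sector."""
    thresholds = {t: BASELINE_THRESHOLD for t in known_techniques}
    for report in reports:
        if our_sector in report.target_sectors:
            for technique in report.techniques:
                thresholds[technique] = ELEVATED_THRESHOLD
    return thresholds


def should_alert(technique, anomaly_score, thresholds):
    """Alert when the behavioral score meets the threshold for that technique."""
    return anomaly_score >= thresholds.get(technique, BASELINE_THRESHOLD)


reports = [ActorReport("EXAMPLE-ACTOR", {"logistics"}, {"T1566", "T1486"})]
thresholds = build_thresholds(reports, "logistics", {"T1566", "T1021", "T1486"})
```

With these assumed values, a phishing-related behavior (T1566) scoring 0.65 would alert, while the same score on a technique the actor is not known to use would not. The sensitivity change happens when the report arrives, before any indicator is seen locally.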

Behavioral AI tells you what happened. Threat intelligence tells you who did it, why it matters, and what comes next. Neither is sufficient alone. Together, they define what a mature AI SOC looks like.

What Each Type of Threat Intelligence Does Inside an AI SOC

Not all threat intelligence serves the same function inside an AI SOC. The four established categories of threat intelligence each contribute differently to the detection and response pipeline, and each has a distinct failure mode when missing.

1. Strategic intelligence covers nation-state campaigns, industry targeting trends, and geopolitical threat context. Inside an AI SOC, it informs model weighting, elevating risk scoring for TTPs associated with threat actors relevant to your sector. Without it, the SOC detects events without knowing whether they fit a broader campaign that is already underway.

2. Operational intelligence describes active campaigns, current adversary infrastructure, and the attack tooling being used right now. It feeds real-time correlation, connecting live alerts to known active campaigns rather than treating each signal in isolation. When operational intelligence is absent, alerts are answered individually, and the campaign-level pattern connecting a phishing email on Monday to lateral movement on Thursday goes unrecognized.

3. Tactical intelligence is what most organizations mean when they say “threat intel”: IPs, domains, file hashes, and URLs tied to specific threats. Its role is to enrich every alert with actor attribution and threat context at the moment of ingestion. Without it, the SOC can match and block, but cannot attribute or connect indicators to a campaign structure.

4. Technical intelligence covers malware signatures, exploit code patterns, and C2 communication protocols. It tunes the behavioral models, teaching the AI what malicious patterns look like at the technique level. Without it, models train on generic anomalies rather than technique-specific deviations and miss the behavioral signatures that distinguish a targeted intrusion from routine noise.

The most common gap in enterprise threat intelligence programs is an overemphasis on tactical intelligence (IOC feeds) at the expense of operational and technical intelligence. IOC feeds provide matching capability. Operational and technical intelligence provide understanding. An AI SOC that can match IOCs but cannot correlate behaviors to campaign TTPs is missing the capability that makes threat intelligence genuinely predictive.
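To make the difference between matching and correlating concrete, here is a rough Python sketch of campaign-level correlation. It assumes alerts have already been labeled with a MITRE ATT&CK technique ID and keyed to an entity such as a user account; the `Alert` structure, the campaign profile, and the seven-day window are all hypothetical choices for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Alert:
    entity: str       # assumed correlation key, e.g. a user account
    technique: str    # MITRE ATT&CK technique ID assigned upstream
    seen_at: datetime


# Hypothetical campaign profile derived from operational intelligence.
CAMPAIGNS = {
    "example-credential-campaign": {"T1566", "T1078", "T1021"},
}


def correlate(alerts, campaigns, window=timedelta(days=7), min_techniques=2):
    """Group alerts per entity and report campaigns whose techniques appear
    at least `min_techniques` times (distinct) within the recent window."""
    by_entity = defaultdict(list)
    for alert in alerts:
        by_entity[alert.entity].append(alert)

    findings = []
    for entity, items in by_entity.items():
        latest = max(a.seen_at for a in items)
        recent = {a.technique for a in items if latest - a.seen_at <= window}
        for name, techniques in campaigns.items():
            matched = recent & techniques
            if len(matched) >= min_techniques:
                findings.append((entity, name, sorted(matched)))
    return findings


alerts = [
    Alert("alice", "T1566", datetime(2024, 3, 4, 9, 0)),   # phishing, Monday
    Alert("alice", "T1021", datetime(2024, 3, 7, 14, 0)),  # lateral movement, Thursday
    Alert("bob", "T1566", datetime(2024, 3, 4, 9, 5)),
]
findings = correlate(alerts, CAMPAIGNS)
```

In this example the two alerts for `alice` are linked to the same campaign even though they are three days apart, while the single alert for `bob` stays below the assumed two-technique minimum. An IOC match alone would not have connected them.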

The Integration Architecture: How Threat Intelligence Flows into Detection

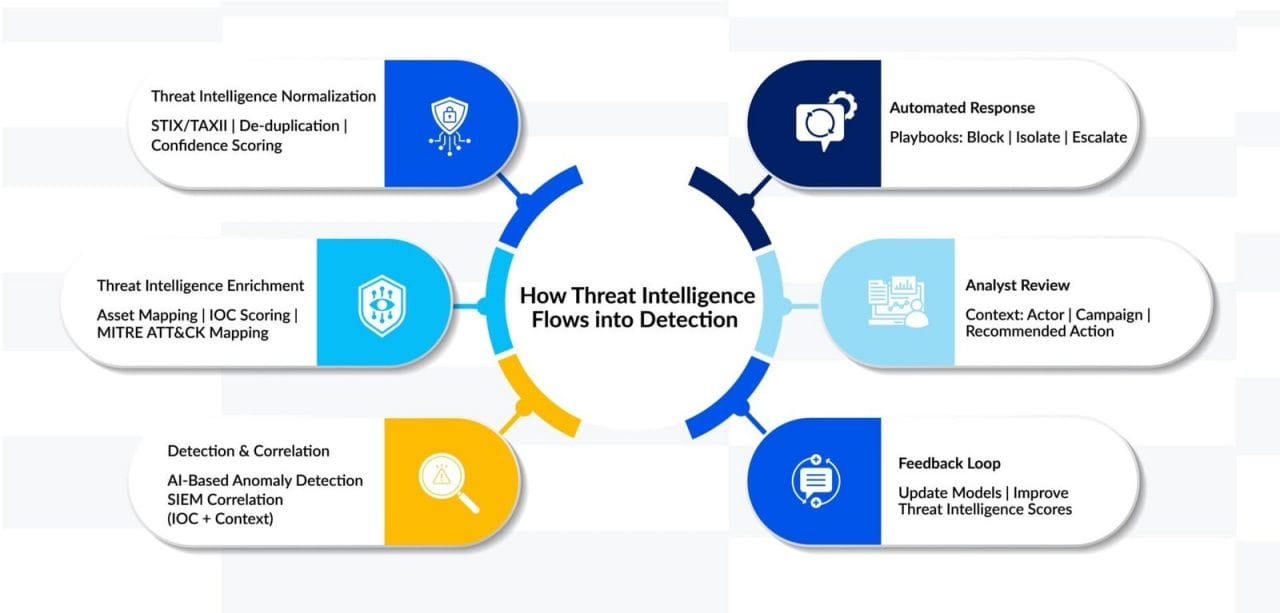

The diagram below maps how threat intelligence from external sources flows through normalization, enrichment, and integration into the AI SOC detection and response pipeline, and where closed-loop feedback returns outcomes to improve both model accuracy and TI quality over time.

Threat Intelligence Integration Architecture:

Threat Intelligence with AI vs. Threat Intelligence without AI: The Operational Difference

The table below captures what changes when threat intelligence is embedded inside the AI detection layer versus treated as a separate tool that analysts consult manually.

| Dimension | Threat intel without AI SOC | Threat intel inside AI SOC |
|---|---|---|
| Feed processing | Analysts manually review IOC reports. Coverage is limited by bandwidth. | Ingested, normalized, and correlated automatically across all feeds. |
| Actioning speed | Hours to days, from advisory to detection rule updates. | Sub-minute: TI enriches live alerts the moment a feed updates. |
| Context depth | IOC matched in isolation, no behavioral context layered in. | IOC + actor TTPs + asset criticality + historical behavior combined. |
| Coverage decay | Stale IOCs remain active; no automated expiry or confidence scoring. | Confidence scores decay over time; the model deprioritizes aged indicators. |
| Unknown threats | The TI-only approach has no coverage for threats with no prior intelligence. | Behavioral AI covers zero-days; TI adds actor context when it arrives. |
| Analyst load | High: TI review competes with alert triage for the same headcount. | Low: TI enrichment is automated; analysts see pre-contextualized alerts. |
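The "coverage decay" row can be illustrated with a simple exponential decay of indicator confidence. The sketch below is one possible scheme, not the method of any specific platform: the 30-day half-life and the 0.10 expiry floor are hypothetical values that a real program would tune per indicator type.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical tuning values; real programs set these per indicator type.
HALF_LIFE_DAYS = 30.0   # confidence halves every 30 days without a new sighting
EXPIRY_FLOOR = 0.10     # below this, the indicator is treated as expired


def decayed_confidence(initial, last_seen, now, half_life_days=HALF_LIFE_DAYS):
    """Return the confidence after exponential decay since the last sighting."""
    age_days = max((now - last_seen).total_seconds() / 86400.0, 0.0)
    return initial * 0.5 ** (age_days / half_life_days)


def is_active(initial, last_seen, now):
    """An indicator stays active while its decayed confidence is above the floor."""
    return decayed_confidence(initial, last_seen, now) >= EXPIRY_FLOOR


now = datetime(2024, 4, 1, tzinfo=timezone.utc)
fresh = decayed_confidence(0.8, now - timedelta(days=30), now)   # one half-life
stale = is_active(0.8, now - timedelta(days=120), now)           # four half-lives
```

Under these assumptions an indicator that started at 0.8 confidence drops to about 0.4 after 30 days and falls below the expiry floor after 120 days, at which point it would stop influencing alert priority.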

Five Scenarios: The Same Threat with and without Threat Intelligence

The operational gap between a Threat Intelligence-integrated AI SOC and one operating without live threat intelligence is most visible in concrete scenarios. Each case below reflects a documented enterprise threat pattern.

| Scenario | Without Threat Intelligence as Core AI SOC Input | With Threat Intelligence Integrated as Core Input (Splunk-Enabled) |
|---|---|---|
| Known threat actor targets your sector | AI detects behavioral anomalies but lacks context. Alert is treated as isolated. Analyst investigates from scratch. | Threat intelligence correlates anomalies to active campaign TTPs. Splunk enriches the alert with actor profile, known patterns, and next steps. Recommended containment actions surfaced instantly. |
| Malicious IP begins probing the network | IP checked against internal blocklist only. No match, so no alert. Probing continues undetected. | Splunk ingests live threat intelligence feeds. IP identified as active C2 infrastructure. Automated block triggered and alert generated within seconds. |
| Phishing campaign targets the finance team | Behavioral model flags email anomaly without campaign context. Generic alert issued with limited priority. | Threat intelligence links email to an active credential-harvesting campaign. Splunk correlates indicators, identifies an impersonated brand, and escalates an alert with full campaign context. |
| Ransomware group publishes new TTPs | New techniques are not yet covered by detection rules. The SOC remains unaware until an incident occurs. | Threat intelligence feed updates in real time. Splunk adapts detections dynamically. AI flags behaviors aligned with the new TTP chain, with zero lag between advisory and detection. |
| An insider exfiltrates data using a known tool | The behavioral model sees tool usage as normal. No context that the tool is being abused. | Threat intelligence identifies tool association with data exfiltration campaigns. Splunk combines behavior + context, generating high-confidence alerts within hours. |

The pattern across every scenario is the same: without TI integration, the AI SOC detects events. With TI integration, it detects events with meaning, and that meaning is what converts an alert into a decision.
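The malicious-IP scenario above comes down to whether an event is checked against live intelligence at detection time. The following sketch shows that lookup in plain Python. It does not use or represent any Splunk API; the `Indicator` and `IndicatorIndex` types, the event fields, and the 0.8 block threshold are hypothetical, and the IP addresses come from ranges reserved for documentation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Indicator:
    value: str         # the IOC itself, e.g. an IP address
    actor: str         # attributed actor, as reported by the feed
    role: str          # e.g. "c2"
    confidence: float  # 0 to 1


class IndicatorIndex:
    """In-memory index standing in for a continuously refreshed TI store."""

    def __init__(self):
        self._by_value = {}

    def upsert(self, indicator):
        self._by_value[indicator.value.lower()] = indicator

    def lookup(self, value) -> Optional[Indicator]:
        return self._by_value.get(value.lower())


def enrich_event(event, index, block_threshold=0.8):
    """Attach TI context to a network event and choose an action."""
    enriched = dict(event)
    hit = index.lookup(event["src_ip"])
    if hit is None:
        enriched.update(ti_match=False, action="none")
        return enriched
    enriched.update(ti_match=True, actor=hit.actor, role=hit.role,
                    confidence=hit.confidence)
    # High-confidence matches are blocked; weaker ones only raise an alert.
    enriched["action"] = "block" if hit.confidence >= block_threshold else "alert"
    return enriched


index = IndicatorIndex()
index.upsert(Indicator("203.0.113.7", "EXAMPLE-ACTOR", "c2", 0.9))
result = enrich_event({"src_ip": "203.0.113.7", "dest_port": 443}, index)
```

The point of the design is that the lookup runs inside the event path. The same index consulted by an analyst after the alert would return the same answer, only later.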

The Seven-Step Operationalization Framework

Integrating threat intelligence into an AI SOC is not a configuration task. It is a program, and it requires the same architectural discipline as any other security capability. The framework below maps the seven phases that distinguish a functioning TI-integrated AI SOC from one where TI is technically present but operationally inert.

| Phase | Action | What the AI SOC gains | Common failure without this |
|---|---|---|---|
| Source | Select and onboard TI feeds: commercial, ISAC, open-source, government advisories. Prioritize feeds relevant to your sector and region. | Relevant, current adversary context for your specific threat exposure | Generic intel not calibrated to the organization’s actual exposure |
| Normalize | Standardize all feeds to a common format (STIX/TAXII or equivalent). Strip duplicate IOCs, resolve conflicting confidence scores. | Clean, deduplicated intel the AI can ingest without noise or contradiction | Conflicting feeds produce false positives that erode analyst trust |
| Enrich | Map IOCs to asset inventory. Score each indicator by confidence, recency, and relevance to crown-jewel systems. | Risk-weighted alerts: high-value assets + high-confidence TI = top priority | All IOC matches are treated equally, regardless of asset criticality |
| Integrate | Push live TI into the SIEM, EDR, and AI detection layer. Automate feed refresh cycles. Connect TI to playbook triggers. | TI enrichment happens at detection time, not after the fact | TI remains in a separate platform; analysts pull it manually post-alert |
| Correlate | Enable the AI model to cross-reference alerts against active campaign TTPs. Map detected behaviors to MITRE ATT&CK. | Every alert surfaces actor context, campaign affiliation, and next-step prediction | Alerts answered in isolation; lateral campaign spread not anticipated |
| Validate | Run closed-loop feedback: confirmed incidents update model weights. False positives from stale TI trigger feed review. | Detection accuracy compounds: the model improves from every TI-correlated outcome | TI quality never improves; stale feeds continue degrading alert fidelity |
| Govern | Set TI expiry policies. Assign feed ownership. Review intel relevance quarterly against your actual incident history. | TI program stays calibrated to real threats, not legacy advisory subscriptions | Feed subscriptions accumulate; program drifts from operational relevance |

Most common failure point: Phase 4 – integration. Organizations complete sourcing, normalization, and enrichment, but keep TI in a separate platform. Analysts pull it manually after an alert. At that point, TI is a reference tool, not a detection input, and its value collapses to near zero in time-sensitive incidents.
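The Normalize and Enrich phases in the table above are the easiest to show in code. The sketch below assumes feed records have already been parsed into dictionaries; the field names, the per-feed reliability weights, and the multiplicative scoring formula are hypothetical choices, shown as one reasonable way to deduplicate IOCs, reconcile conflicting confidence scores, and weight the result by asset criticality.

```python
from collections import defaultdict

# Hypothetical reliability weights; a real program would derive these from outcomes.
FEED_WEIGHT = {"isac": 1.0, "commercial": 0.8, "open-source": 0.5}
DEFAULT_WEIGHT = 0.5


def normalize(raw_records):
    """Deduplicate IOCs by (type, value) and merge conflicting confidence
    scores into a reliability-weighted mean."""
    grouped = defaultdict(list)
    for record in raw_records:
        key = (record["type"], record["value"].strip().lower())
        grouped[key].append(record)

    merged = {}
    for key, records in grouped.items():
        weights = [FEED_WEIGHT.get(r["feed"], DEFAULT_WEIGHT) for r in records]
        weighted = sum(w * r["confidence"] for w, r in zip(weights, records))
        merged[key] = {
            "type": key[0],
            "value": key[1],
            "confidence": weighted / sum(weights),
            "feeds": sorted({r["feed"] for r in records}),
        }
    return merged


def risk_score(confidence, recency, asset_criticality):
    """Combine three 0-to-1 factors into a 0-to-100 priority score."""
    return round(100 * confidence * recency * asset_criticality)


raw = [
    {"feed": "isac", "type": "domain", "value": "Evil.example ", "confidence": 0.9},
    {"feed": "open-source", "type": "domain", "value": "evil.example", "confidence": 0.3},
]
intel = normalize(raw)
```

Here the two conflicting reports collapse into a single indicator with a merged confidence of about 0.7, leaning toward the more reliable feed. A match on that indicator against a crown-jewel asset would then outrank the same match on a low-criticality host.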

Where Threat Intelligence Programs Fail Operationally

Most organizations have some form of threat intelligence. Very few have operationalized it in a way that makes their AI SOC materially more effective. The gap between subscribing to TI and extracting value from it is consistent across enterprises, and the failure modes are predictable.

The Five Failure Patterns

1. Threat Intelligence as a separate platform – The most common failure. Intelligence sits in a dedicated TI platform and is consulted by analysts post-alert. It never touches the detection model. The AI SOC operates blind to the current campaign context, while the TI platform holds the answers.

2. IOC-only programs – Organizations equate threat intelligence with IOC feeds. They block known-malicious IPs and domains, but have no operational or technical intel integrated into behavioral detection. The AI model cannot correlate behaviors to actor TTPs because it has never been given that context.

3. Feed accumulation without governance – TI subscriptions accumulate over time with no review of relevance or quality. Stale feeds introduce noise. Conflicting confidence scores from different providers produce inconsistent alert prioritization. The AI model degrades because its enrichment layer is polluted.

4. No closed-loop feedback – Confirmed incidents and reviewed false positives are not fed back into the TI program. Feed quality never improves. Stale IOCs remain active. The model does not learn from outcomes. The TI program exists at a static quality level indefinitely.

5. Sector mismatch – Generic commercial feeds are not calibrated to the organization’s sector, geography, or technology stack. The AI SOC receives high volumes of irrelevant IOCs, generating noise that erodes analyst confidence in the system. Relevant, targeted intelligence from ISACs or sector-specific providers is absent.
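Failure patterns 3 and 4 above share a remedy: record what happened to each TI-driven alert and use that record to review feeds. A minimal sketch of such a scorecard follows. The `FeedScorecard` class, the smoothed precision estimate, and the review thresholds are hypothetical, intended only to show the shape of a closed feedback loop.

```python
from collections import Counter


class FeedScorecard:
    """Track, per feed, how many TI-driven alerts were confirmed or dismissed."""

    def __init__(self):
        self.confirmed = Counter()
        self.false_positive = Counter()

    def record(self, feed, was_true_positive):
        """Log the analyst's verdict on an alert that this feed contributed to."""
        if was_true_positive:
            self.confirmed[feed] += 1
        else:
            self.false_positive[feed] += 1

    def precision(self, feed):
        """Smoothed precision estimate: (TP + 1) / (TP + FP + 2)."""
        tp = self.confirmed[feed]
        fp = self.false_positive[feed]
        return (tp + 1) / (tp + fp + 2)

    def feeds_for_review(self, min_precision=0.5, min_samples=5):
        """Feeds with enough verdicts and a precision below the assumed bar."""
        feeds = set(self.confirmed) | set(self.false_positive)
        return sorted(
            f for f in feeds
            if self.confirmed[f] + self.false_positive[f] >= min_samples
            and self.precision(f) < min_precision
        )
```

The same precision figure could feed back into per-feed reliability weights, so that a feed which keeps producing false positives gradually loses influence over alert priority rather than being judged only at renewal time.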

Diagnostic Questions for Security Leadership

Before investing in additional threat intelligence subscriptions or AI detection tooling, security leaders need an honest view of how their current threat intelligence program interfaces with their detection architecture. These questions are designed to surface the operational gaps that budget conversations consistently obscure.

On integration

- When a new IOC is published in your threat intelligence feed, how long does it take to reach your AI detection model: minutes, hours, or only after a manual update?

- Is your threat intelligence platform integrated directly into your SIEM and AI detection layer, or do analysts consult it separately after alerts are raised?

- When the last major ransomware advisory was issued for your sector, how long did it take your SOC to act on it?
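The integration questions above can be answered with data rather than estimates. The sketch below shows two small measurements in Python: a retrospective sweep of historical logs for the indicators in an advisory, and the elapsed time between an advisory's publication and the deployment of a matching detection. The log record fields and function names are hypothetical.

```python
from datetime import datetime


def retro_hunt(log_records, indicators):
    """Search historical logs for advisory indicators and summarize the hits.
    Returns None when no indicator appears in the logs."""
    wanted = {i.lower() for i in indicators}
    hits = [r for r in log_records if r["domain"].lower() in wanted]
    if not hits:
        return None
    hits.sort(key=lambda r: r["timestamp"])
    return {
        "first_seen": hits[0]["timestamp"],
        "last_seen": hits[-1]["timestamp"],
        "hit_count": len(hits),
        "hosts": sorted({r["host"] for r in hits}),
    }


def hours_to_action(advisory_published, detection_deployed):
    """Elapsed hours between an advisory and the detection that covers it."""
    return (detection_deployed - advisory_published).total_seconds() / 3600


logs = [
    {"timestamp": datetime(2024, 3, 5, 8, 0), "host": "fw-1", "domain": "c2.example"},
    {"timestamp": datetime(2024, 3, 4, 22, 0), "host": "fw-2", "domain": "C2.EXAMPLE"},
    {"timestamp": datetime(2024, 3, 5, 9, 0), "host": "fw-1", "domain": "cdn.example"},
]
summary = retro_hunt(logs, ["c2.example"])
```

Run automatically whenever an advisory is ingested, a sweep like this would surface the situation described in the opening example, where the advisory's C2 domain was already present in firewall logs.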

On coverage

- Are your threat intelligence feeds calibrated to your sector and geography, or are they generic commercial feeds that may not reflect your actual threat exposure?

- Do you have operational threat intelligence (active campaign context, actor TTPs), or primarily tactical TI in the form of IOC lists?

- Is your AI detection model tuned to the techniques of the threat actors most likely to target your organization, or is it trained only on generic anomaly patterns?

On governance

- Who owns the threat intelligence program, and is that person accountable for detection outcomes, or only for feed subscriptions?

- When did you last review whether your threat intelligence feed subscriptions reflect your actual current threat exposure, and retire feeds that no longer do?

- Does your closed-loop feedback process exist in practice or only on paper? Do confirmed incidents actually update your model weights and threat intelligence confidence scores?

If these questions reveal that TI is technically present but not operationally integrated, the program is providing the appearance of intelligence without delivering its value. That gap is not a subscription problem. It is an architecture problem.

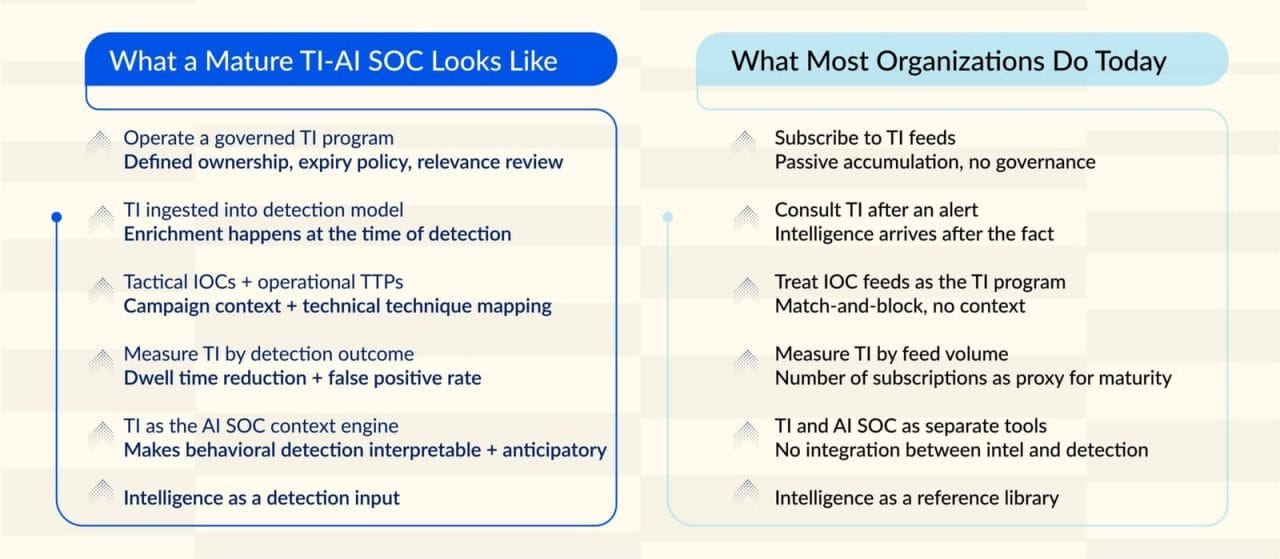

What Mature Looks Like and How to Get There

A mature TI-integrated AI SOC does not look like an organization with more subscriptions. It looks like an organization whose AI detection model already knows, when the first anomaly appears, whether that anomaly fits the current threat actor playbook targeting its sector, and what that actor’s next three moves are likely to be.

That capability is not a product. It is the result of an integration discipline that most organizations have not applied to their TI program.

The Posture Shift Required

The Practical Starting Point

The organizations that extract full value from their AI SOC investment are not the ones with the most TI subscriptions. They are the ones that have closed the gap between intelligence and detection, where TI is not a tab that analysts open after an alert, but the context layer that tells the AI what it is actually looking at before the alert is even raised.

That shift is architectural. It does not require replacing your existing stack. It requires connecting the intelligence you already have directly into the detection model that acts on it and governing that connection, so it stays calibrated to your actual threat exposure, not your legacy subscription list.

Most enterprises are closer to that posture than they realize. The gap is rarely a missing tool. It is an unbuilt integration and an unowned governance process.

At Prudent, we work with security teams to close exactly that gap, mapping current TI program maturity, identifying where intelligence stops short of the detection layer, and building the integration and governance architecture that makes an AI SOC operationally complete.

If your organization is ready to move from a TI program that references intelligence to one that acts on it, the starting point is a conversation about where your current architecture leaves you exposed.

The organizations that extract full value from their AI SOC investment are the ones that treat threat intelligence not as a reference library, but as the context engine that tells the AI what it is looking at. That shift is architectural. And it is available to any enterprise willing to make it.