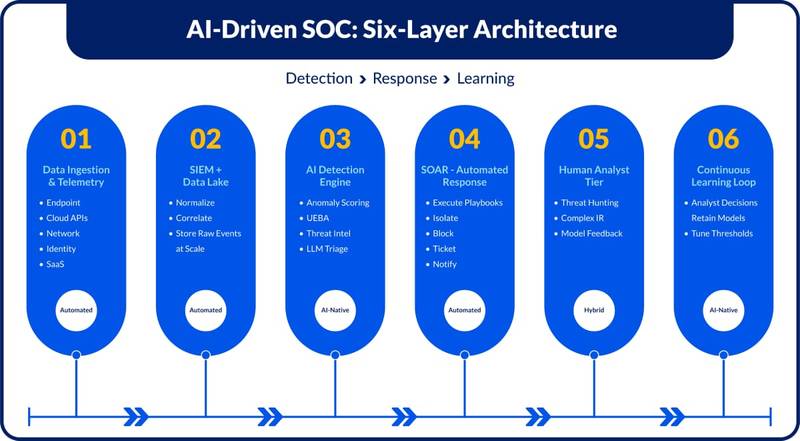

Architecture Overview

An AI-driven operating model has six interconnected layers, each handling a distinct function in the detection and response lifecycle.

The architectural premise: automate high-volume, low-ambiguity work at machine speed, and concentrate human attention on the work that genuinely requires it.

Each layer produces structured output that feeds the next.

Detection and containment can complete a full automated cycle before an analyst receives a notification. The human tier handles ambiguous investigations, novel threat patterns, and decisions with business or legal consequences.

Layer 01–02: Telemetry and the Data Foundation

Every downstream AI capability is bounded by the quality and completeness of the data it receives. Telemetry coverage is the most foundational architectural decision in SOC modernization and the most frequently underinvested one.

A mature AI-native SOC ingests across the full attack surface:

- EDR agents on every managed endpoint

- Firewall, proxy, and DNS logs

- Cloud provider API trails – AWS CloudTrail, Azure Activity Log, GCP Audit

- Identity and access events – Okta, Azure AD, CyberArk

- SaaS application logs via CASB connectors

- Deception assets – honeypots and canary tokens for high-fidelity early warning

Schema-on-read vs. schema-on-write

Legacy SIEMs normalized events at ingest (schema-on-write), which meant:

- Data loss for any fields outside the predefined schema

- Slow, painful onboarding whenever a new log source was added

- Brittle pipelines that broke when vendors changed log formats

Modern data lakes, such as Google Chronicle, Microsoft Sentinel, and Elastic Security, ingest raw events and normalize at query time instead. The Open Cybersecurity Schema Framework (OCSF) provides a vendor-neutral taxonomy that makes cross-source normalization consistent.

This enables:

- Faster source onboarding

- Full-fidelity event retention

- Retrospective queries whenever new detection logic is developed
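The schema-on-read idea can be sketched in a few lines: raw events are stored untouched, and a per-source mapper is applied only when a query runs. This is a minimal illustration, not how any of the named platforms implement it. `RAW_STORE`, `ingest`, `query`, and the mapper functions are hypothetical names, and the output fields are a simplified OCSF-inspired view rather than the full OCSF schema.

```python
import json
from typing import Any, Callable

# Hypothetical mappers from a vendor's raw event to a small normalized view.
# Output field names are simplified for illustration, not the full OCSF schema.
def map_okta(raw: dict[str, Any]) -> dict[str, Any]:
    return {
        "class_name": "Authentication",
        "time": raw.get("published"),
        "user": raw.get("actor", {}).get("alternateId"),
        "src_ip": raw.get("client", {}).get("ipAddress"),
    }

def map_cloudtrail(raw: dict[str, Any]) -> dict[str, Any]:
    return {
        "class_name": "API Activity",
        "time": raw.get("eventTime"),
        "user": raw.get("userIdentity", {}).get("arn"),
        "src_ip": raw.get("sourceIPAddress"),
    }

MAPPERS: dict[str, Callable[[dict[str, Any]], dict[str, Any]]] = {
    "okta": map_okta,
    "cloudtrail": map_cloudtrail,
}

# Raw events are kept exactly as received: (source name, raw JSON line).
RAW_STORE: list[tuple[str, str]] = []

def ingest(source: str, raw_line: str) -> None:
    """Store the event without parsing or dropping any field."""
    RAW_STORE.append((source, raw_line))

def query(predicate: Callable[[dict[str, Any]], bool]) -> list[dict[str, Any]]:
    """Normalize at read time and return the events that match the predicate."""
    results = []
    for source, line in RAW_STORE:
        mapper = MAPPERS.get(source)
        if mapper is None:
            continue  # no mapper yet; the raw event is still retained
        event = mapper(json.loads(line))
        if predicate(event):
            results.append(event)
    return results
```

Because unmapped sources are skipped at query time rather than rejected at ingest, adding a mapper later makes the already-stored events searchable, which is what allows retrospective queries.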

Establish formal telemetry coverage targets before deploying ML detection. Model sophistication cannot compensate for gaps in visibility. 100% coverage of crown-jewel assets is the minimum floor.
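A coverage target only helps if it is measured. The sketch below shows one way to compute coverage per asset tier and flag tiers that fall short. The tier names, required telemetry sources, and the 90% target for standard assets are illustrative assumptions; only the 100% floor for crown-jewel assets comes from the text above.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Asset:
    name: str
    tier: str                 # assumed tiers: "crown_jewel" or "standard"
    sources: frozenset[str]   # telemetry sources currently reporting for the asset

# Assumed policy: which sources an asset needs before it counts as covered.
REQUIRED_SOURCES = {
    "crown_jewel": {"edr", "identity", "network"},
    "standard": {"edr"},
}
# Assumed targets; the 1.0 floor for crown jewels follows the text.
COVERAGE_TARGETS = {"crown_jewel": 1.0, "standard": 0.9}

def coverage_by_tier(assets: list[Asset]) -> dict[str, float]:
    """Fraction of assets in each tier that report every required source."""
    totals: dict[str, int] = {}
    covered: dict[str, int] = {}
    for asset in assets:
        totals[asset.tier] = totals.get(asset.tier, 0) + 1
        if REQUIRED_SOURCES[asset.tier] <= asset.sources:
            covered[asset.tier] = covered.get(asset.tier, 0) + 1
    return {tier: covered.get(tier, 0) / count for tier, count in totals.items()}

def tiers_below_target(assets: list[Asset]) -> list[str]:
    """Tiers whose measured coverage is under the configured target."""
    coverage = coverage_by_tier(assets)
    return [tier for tier, value in coverage.items() if value < COVERAGE_TARGETS[tier]]
```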

Layer 03: The AI Detection Engine

The detection engine runs four capabilities in parallel, each targeting a different threat class. The combined output is a continuous, enriched risk picture across every entity in the environment, not a queue of binary alerts.

Statistical anomaly detection

Statistical models are built on per-entity baselines across users, hosts, applications, and network endpoints. Deviations produce risk scores, not standalone alerts. Those scores feed a broader risk aggregation rather than firing in isolation.

Detection signals include:

- Impossible travel – authentication from two distant locations within minutes

- Unusual outbound ports on a server that has never used them

- Processes spawning unexpected child trees
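As an illustration of the first signal, impossible travel can be approximated by comparing the speed implied by two logins against a plausibility ceiling. This is a sketch under stated assumptions: the 900 km/h ceiling, the 50 km same-area cutoff, and the function names are all hypothetical, and real deployments also have to account for VPNs and inaccurate IP geolocation.

```python
import math
from datetime import datetime

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_KMH = 900.0   # assumed ceiling, roughly airliner cruising speed
SAME_AREA_KM = 50.0         # assumed cutoff below which travel is ignored

def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

def travel_risk(login_a: tuple[datetime, float, float],
                login_b: tuple[datetime, float, float]) -> float:
    """Score in [0, 1] for two logins given as (timestamp, latitude, longitude)."""
    distance = haversine_km(login_a[1], login_a[2], login_b[1], login_b[2])
    if distance < SAME_AREA_KM:
        return 0.0
    hours = abs((login_b[0] - login_a[0]).total_seconds()) / 3600
    if hours == 0:
        return 1.0
    implied_speed = distance / hours
    # 0 at or below the ceiling, rising linearly to 1 at twice the ceiling.
    excess = (implied_speed - MAX_PLAUSIBLE_KMH) / MAX_PLAUSIBLE_KMH
    return max(0.0, min(1.0, excess))
```

Returning a score rather than a boolean keeps this signal compatible with the risk aggregation described in this section.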

Common implementations use time-series analysis for volume anomalies, isolation forests for behavioral outliers, and k-means clustering for peer group deviations. The key design choice is output format: a continuous score that aggregates with other signals produces far fewer spurious alerts than a binary threshold.
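The output-format point can be made concrete with a deliberately simple stand-in. The sketch below uses a per-entity z-score instead of the isolation forests or clustering mentioned above, maps it to a continuous score, and combines several scores with a noisy-OR. The squashing constant and both function names are assumptions chosen for illustration.

```python
import math
from statistics import mean, pstdev

def deviation_score(history: list[float], observed: float) -> float:
    """Map the absolute z-score of `observed` against an entity's own history
    to a score in [0, 1]. The divisor 3 is an arbitrary squashing constant."""
    if len(history) < 2:
        return 0.0  # not enough baseline to judge
    mu = mean(history)
    sigma = pstdev(history)
    if sigma == 0:
        return 0.0 if observed == mu else 1.0
    z = abs(observed - mu) / sigma
    return 1 - math.exp(-z / 3)

def aggregate(scores: list[float]) -> float:
    """Noisy-OR combination: several weak signals add up,
    and one strong signal dominates."""
    remaining = 1.0
    for score in scores:
        remaining *= 1 - score
    return 1 - remaining
```

With this combination rule, two signals at 0.3 aggregate to about 0.51. Neither would cross a 0.5 binary threshold on its own, but together they raise the entity's risk.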

User and Entity Behavior Analytics

Where anomaly detection flags individual data points, UEBA identifies patterns that only become significant in sequence.

An after-hours login is a low signal. The same login followed by bulk file enumeration, an archive operation, and an upload to an external domain is a high-confidence exfiltration pattern – even if no individual event crossed a threshold.
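The sequence idea can be shown with a rule-based stand-in: check whether a user's events contain a given pattern, in order, within a time window. This is not the learned sequence model that UEBA products use. The action labels, the two-hour window, and `matches_sequence` are hypothetical and serve only to show why order and proximity matter more than any single event.

```python
from datetime import datetime, timedelta

# Hypothetical action labels for the exfiltration pattern described above.
EXFIL_PATTERN = ("after_hours_login", "bulk_file_enum", "archive_create", "external_upload")

def matches_sequence(events: list[tuple[datetime, str]],
                     pattern: tuple[str, ...] = EXFIL_PATTERN,
                     window: timedelta = timedelta(hours=2)) -> bool:
    """True if `pattern` occurs as an ordered subsequence of one user's events,
    with every step inside `window` of the first step."""
    ordered = sorted(events)
    for i, (start, action) in enumerate(ordered):
        if action != pattern[0]:
            continue
        matched = 1
        for timestamp, later_action in ordered[i + 1:]:
            if matched == len(pattern) or timestamp - start > window:
                break
            if later_action == pattern[matched]:
                matched += 1
        if matched == len(pattern):
            return True
    return False
```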

Implementation notes:

- Typically built on RNNs or transformer architectures, learning temporal patterns across long lookback windows

- Requires 6-12 months of baseline telemetry before producing reliable output

- Deployments that skip the baseline period see high false positive rates early, eroding analyst trust before the feedback loop has a chance to stabilize.

Threat intelligence enrichment

Simultaneously with behavioral analysis, every observable extracted from events, including IPs, domains, file hashes, and certificate fingerprints, is correlated against threat feeds in near real-time.

| Commercial feeds | Recorded Future, CrowdStrike Falcon Intelligence |

|---|---|

| Open-source | VirusTotal, AlienVault OTX, MISP instances |

| Sector-specific | ISACs (Information Sharing & Analysis Centers) |

| Lookup method | In-memory for high-frequency; async API for lower-frequency observables |

By the time an alert surfaces to an analyst, its indicators have already been cross-referenced. What previously required 10–15 minutes of manual enrichment per alert is handled automatically. The analyst opens a case that has already been investigated at the IOC level.
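The two lookup paths from the table can be sketched as an in-memory dictionary for high-frequency observables and concurrent asynchronous calls for the rest. `LOCAL_IOCS`, `remote_lookup`, and `enrich` are hypothetical. The remote call is a placeholder that returns no match and does not represent any vendor's API.

```python
import asyncio
from typing import Optional

# Hypothetical in-memory cache of known-bad observables (documentation IP range).
LOCAL_IOCS: dict[str, str] = {
    "203.0.113.7": "known C2 (illustrative)",
}

async def remote_lookup(observable: str) -> Optional[str]:
    """Placeholder for an asynchronous threat-feed query.
    A real implementation would call a feed's API here."""
    await asyncio.sleep(0)  # stands in for network I/O
    return None

async def enrich(observables: list[str]) -> dict[str, Optional[str]]:
    """Answer from memory where possible; query the rest concurrently."""
    results: dict[str, Optional[str]] = {}
    pending: list[str] = []
    for observable in observables:
        if observable in LOCAL_IOCS:
            results[observable] = LOCAL_IOCS[observable]
        else:
            pending.append(observable)
    answers = await asyncio.gather(*(remote_lookup(o) for o in pending))
    results.update(zip(pending, answers))
    return results

# Usage: findings = asyncio.run(enrich(["203.0.113.7", "example.org"]))
```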

LLM-assisted triage

The LLM layer sits downstream of detection and enrichment. When an incident fires, the model assembles the full context (raw events, UEBA scores, IOC findings, asset ownership from the CMDB, and recent user access history) and produces a structured summary covering:

- What occurred and the event sequence

- Why it is suspicious and what the risk score reflects

- The attacker’s likely objective

- Implicated MITRE ATT&CK techniques
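One way to keep such summaries consistent is to assemble the context into a fixed structure and validate the model's reply against the expected fields. The sketch below does not call any model. `IncidentContext`, `build_prompt`, `validate_summary`, and the field names are assumptions, and the prompt wording is illustrative only.

```python
import json
from dataclasses import asdict, dataclass

@dataclass
class IncidentContext:
    incident_id: str
    raw_events: list[dict]
    ueba_score: float
    ioc_findings: dict[str, str]
    asset_owner: str            # e.g. resolved from the CMDB
    recent_access: list[str]

# Assumed keys the summary must contain, mirroring the list above.
SUMMARY_FIELDS = ("event_sequence", "why_suspicious", "likely_objective", "attack_techniques")

def build_prompt(context: IncidentContext) -> str:
    """Serialize the context deterministically so the same incident
    always yields the same prompt."""
    return (
        "Summarize this security incident as a JSON object with the keys: "
        + ", ".join(SUMMARY_FIELDS)
        + ".\nContext:\n"
        + json.dumps(asdict(context), indent=2, sort_keys=True)
    )

def validate_summary(reply: str) -> dict:
    """Parse the model's reply and reject it if a required field is missing."""
    summary = json.loads(reply)
    missing = [key for key in SUMMARY_FIELDS if key not in summary]
    if missing:
        raise ValueError(f"summary is missing fields: {missing}")
    return summary
```

Rejecting incomplete replies before they reach the case record helps keep the audit trail uniform across incidents.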

LLM-generated summaries also create a consistent, auditable record of why each alert was actioned, directly relevant for NIS2, DORA, and SEC disclosure requirements.

MITRE ATT&CK coverage

| Tactic | Technique | AI Detection Signal |

|---|---|---|

| Initial Access | Spearphishing link (T1566.002) | Email anomaly + click on unusual domain |

| Privilege Escalation | Valid accounts (T1078) | UEBA: atypical access time and scope |

| Lateral Movement | Pass-the-hash (T1550.002) | Same credentials reused across multiple hosts in rapid succession |

| Exfiltration | Exfil over web service (T1567) | Volume anomaly + threat intel hit on destination domain |

| Impact | Data encrypted (T1486) | Mass file extension changes + shadow copy deletion |

The detection engine does not produce a list of alerts. It produces a continuously updated risk picture across every entity in the environment.

Layer 04: Automated Response

SOAR executes structured response playbooks when detection confidence crosses a defined threshold. That threshold is risk-calibrated. The confidence required to trigger an action scales with the potential business impact of that action.

| Low-impact actions (lower confidence threshold) | Alert enrichment, ticket creation, asset owner notification – auto-executed |

|---|---|

| High-impact actions (higher threshold or approval) | Endpoint isolation, account suspension, firewall rule insertion – requires higher confidence or explicit analyst sign-off |
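The risk-calibrated threshold can be expressed as a small policy table: each action has an impact class, and each class has one confidence level for automatic execution and another for requesting sign-off. The numeric thresholds and action names below are assumptions for illustration, not recommended values.

```python
from enum import Enum

class Decision(Enum):
    AUTO_EXECUTE = "auto_execute"
    REQUIRE_APPROVAL = "require_approval"
    NO_ACTION = "no_action"

# Assumed policy per impact class:
# (confidence needed to auto-execute, confidence needed to request sign-off).
POLICY = {
    "low": (0.30, 0.30),
    "high": (0.90, 0.70),
}

# Assumed mapping of playbook actions to impact classes, following the table above.
ACTION_IMPACT = {
    "enrich_alert": "low",
    "create_ticket": "low",
    "notify_asset_owner": "low",
    "isolate_endpoint": "high",
    "suspend_account": "high",
    "insert_firewall_rule": "high",
}

def decide(action: str, confidence: float) -> Decision:
    """Pick the handling for an action given detection confidence in [0, 1]."""
    auto_at, approval_at = POLICY[ACTION_IMPACT[action]]
    if confidence >= auto_at:
        return Decision.AUTO_EXECUTE
    if confidence >= approval_at:
        return Decision.REQUIRE_APPROVAL
    return Decision.NO_ACTION
```

Under these assumed values, a detection at 0.80 confidence creates a ticket automatically but routes endpoint isolation to an analyst for approval.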

Integration depth determines value

The SOAR layer’s value is proportional to how deeply it integrates with the toolstack. Shallow integrations that only create tickets return marginal value. Deep, bidirectional integrations with EDR platforms, identity providers, firewall APIs, and CMDB systems are what enable the containment speed that defines an AI-native SOC.

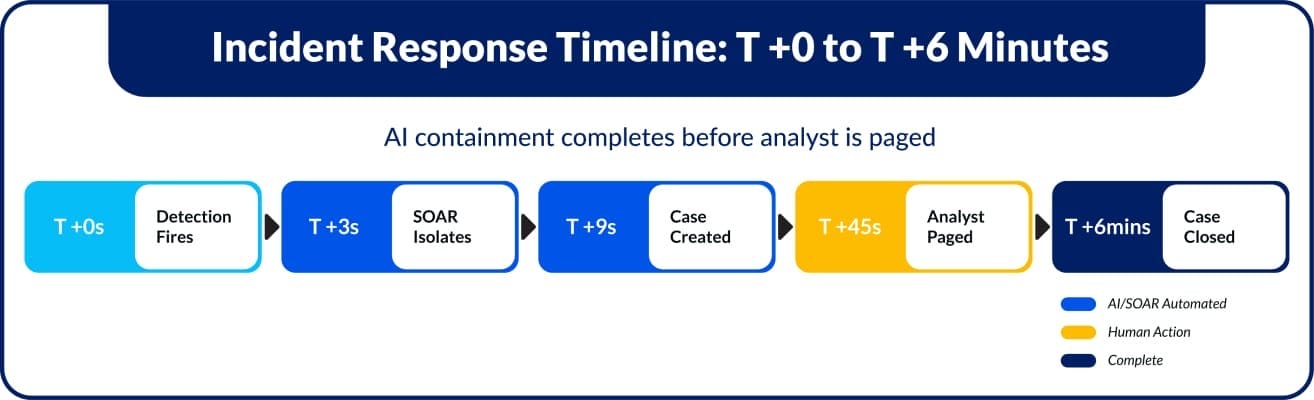

Response lifecycle

Automated response lifecycle for a high-confidence lateral movement detection:

T + 0s

Detection fires at 94% confidence

Lateral movement: credential reuse across 3 hosts in 90 seconds, unusual process spawning on a domain controller.

T + 3s

SOAR executes containment playbook

Endpoint isolated via EDR. User account suspended in Okta. Active sessions revoked. Firewall rule blocks the source IP.

T + 9s

Enrichment and case creation

Asset owner pulled from CMDB. IOCs checked against threat feeds. LLM generates an incident summary. Ticket created in ServiceNow.

T + 45s

On-call analyst paged

Incident already contained. Analyst role: confirm response, identify initial access vector, assess blast radius.

T + 6min

Case closed with analyst feedback

Confirmed true positive. Access path documented. Correlated alerts marked – signals enter training pipelines.

For a high-confidence detection, isolation, enrichment, and documentation complete in under 10 seconds. The analyst’s first action is review and confirmation.

Automated containment actions carry business risk. A playbook that incorrectly isolates a production server causes operational disruption. Approval workflows, impact classifications, and quarterly playbook reviews are operational necessities.

Layer 05: The Human Analyst Tier

In a well-calibrated AI SOC, the human tier handles what automation can’t, not because the technology is immature but because some decisions require contextual judgment, adversarial intuition, and accountability that no current system provides.

Tier 1 triage largely disappears as a primary function. That capacity shifts to:

- Threat hunting – proactively querying the data lake for undetected compromise

- Complex incident response – multi-stage attacks, supply chain intrusions, nation-state activity

- Detection engineering – improving model coverage based on emerging TTP intelligence

- Risk communication – translating technical findings into business-risk language for leadership

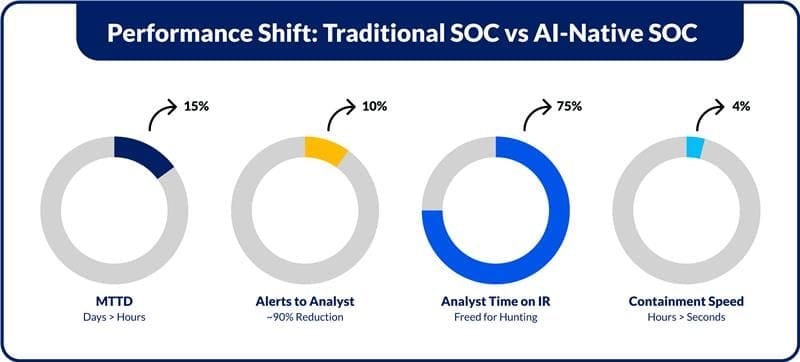

Performance Shift: Traditional SOC vs AI-Native SOC

Threat hunting at depth

With triage handled automatically, analyst time opens up for proactive hunting. Typical hunting targets include:

- Living-off-the-land techniques that blend with normal administrative behavior

- Novel malware families without known signatures

- Attacker dwell patterns that fall just below anomaly detection thresholds

This work requires deep knowledge of attacker TTPs and OS internals. It is not something the current generation of AI models replicates.

Complex incident response

Multi-stage attacks and nation-state intrusions require human-directed investigation. The AI layer compresses time to context: the analyst arrives with enriched data, correlated events, and an LLM-generated summary already assembled.

What remains human:

- Forensic reasoning

- Acquisition sequencing

- Interpretation of ambiguous artefacts

- Escalation decisions

- Communication to legal and executive stakeholders

Layer 06: The Continuous Learning Loop

The feedback loop is what separates a static automation platform from a system that improves over time. Every analyst decision generates a labeled data point that feeds back into model retraining:

- Confirmed true positive – weights that event sequence more heavily in future scoring

- False positive correction – teaches the model to discount that signal combination in this environment

- Investigation notes – improve LLM summary quality for similar future case types
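The first two feedback types can be illustrated with a toy weighting scheme: each analyst verdict updates a smoothed true-positive rate for the signal combination that fired. This is a stand-in for real model retraining, which the text does not specify. `Verdict`, `SignalWeights`, and the prior of one true positive and one false positive are assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Verdict:
    signals: frozenset[str]   # the signal combination behind the alert
    true_positive: bool       # the analyst's decision

class SignalWeights:
    """Smoothed fraction of confirmed true positives per signal combination."""

    def __init__(self, prior_tp: float = 1.0, prior_fp: float = 1.0) -> None:
        # The priors keep a brand-new combination at a neutral 0.5.
        self._tp: defaultdict = defaultdict(lambda: prior_tp)
        self._fp: defaultdict = defaultdict(lambda: prior_fp)

    def record(self, verdict: Verdict) -> None:
        """Fold one analyst decision into the counts."""
        if verdict.true_positive:
            self._tp[verdict.signals] += 1
        else:
            self._fp[verdict.signals] += 1

    def weight(self, signals: frozenset[str]) -> float:
        """Higher after confirmed true positives, lower after corrections."""
        tp, fp = self._tp[signals], self._fp[signals]
        return tp / (tp + fp)
```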

The feedback loop is the mechanism through which the system earns the right to operate with more autonomy over time.

Architectural Risks and How They Manifest

Each layer introduces specific risks when misconfigured or underinvested. Addressing them at the architecture level is structurally more effective than responding reactively.

| Telemetry gaps | Incomplete coverage creates a blind spot that no model compensates for. The risk is that entire threat categories go undetected. Formal coverage targets and regular coverage audits are the controls. |

|---|---|

| UEBA without baseline | Deploying UEBA before sufficient baseline data exists floods early operation with false positives. The model has no reference point for normal. Analyst distrust follows, damaging the feedback loop before it functions. |

| Model drift | Detection models degrade silently as attacker TTPs evolve. Precision falls gradually with no visible failure event. Continuous retraining pipelines and scheduled model evaluation against labeled datasets are the controls. |

| Shallow SOAR integration | SOAR that only creates tickets returns minimal value over a well-configured SIEM. Containment speed depends on deep integrations with EDR, IdP, and network control planes. Evaluate integration depth before vendor selection. |

| Ungoverned playbooks | Automated actions affecting production systems require governance. A playbook that isolates a production database without approval creates disruption. Impact classifications, approval workflows, and rollback procedures must be defined before high-impact automation goes live. |

| Explainability gaps | Black-box detections create compliance risk. NIS2, DORA, and SEC rules require documented, explainable detection rationale. LIME, SHAP, or attention-based architectures that surface contributing signals address this at the model level. |
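For the model drift row, scheduled evaluation against a labeled dataset can be as simple as recomputing precision and comparing it with the value recorded at deployment. The five-point tolerance and the function names are assumptions for this sketch.

```python
from typing import Optional, Sequence

def precision(labels: Sequence[bool], predictions: Sequence[bool]) -> Optional[float]:
    """True positives divided by all positive predictions.
    Returns None when the model made no positive predictions."""
    true_pos = sum(1 for y, p in zip(labels, predictions) if p and y)
    false_pos = sum(1 for y, p in zip(labels, predictions) if p and not y)
    flagged = true_pos + false_pos
    return true_pos / flagged if flagged else None

def has_drifted(baseline: float, current: Optional[float], max_drop: float = 0.05) -> bool:
    """Flag the model when precision has fallen more than `max_drop`
    below the baseline measured at deployment."""
    return current is not None and baseline - current > max_drop
```

Running this check on a schedule turns a silent, gradual decline into an explicit event that can trigger retraining.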

What to Evaluate Now

Security teams are still operating on architectures designed to observe, correlate, and respond in sequence while attackers operate in parallel, at machine speed.

This creates a structural gap that no amount of tooling can close. Three capabilities are at different maturity points. Security Architects should treat them accordingly.

If your SOC:

- Detects threats in minutes instead of seconds

- Depends on analysts to validate every decision

- Cannot correlate identity, endpoint, and network signals in real time

then it is already operating behind the attacker.

That is the signal to evaluate where your architecture is slowing you down.

An AI-driven SOC is an operating model that improves continuously as long as the architecture is designed to learn, and the team is structured to let it.