Enterprise AI drives financial decisions, customer operations, and automated workflows at scale rather than being experimental. Yet the security frameworks most organizations rely on were designed for deterministic software systems that behave predictably, fail in limited ways, and can be assessed at a point in time. AI systems do not fit that model.

According to a recent survey by Gartner, 29% of cybersecurity leaders reported that their organizations have experienced attacks targeting enterprise GenAI application infrastructure in the past 12 months.

If nearly one-third of organizations are already facing attacks, it would be more appropriate to ask whether the remaining two-thirds are secure or haven’t looked close enough to find them.

Because AI-driven attacks are often mild so that it’s embedded in outputs and decision flows rather than system breakdowns.

And if you are not explicitly testing that behavior, you are simply assuming risk and not actually measuring it.

What AI Penetration Testing Actually Evaluates

AI penetration testing evaluates how AI systems behave under adversarial conditions, not when the software is susceptible to vulnerabilities. It examines model inputs and outputs, data pipelines, integration points, and component interactions that standard application testing cannot reach.

How It Differs from Traditional Penetration Testing

| Dimension | Traditional Pen Testing | AI Penetration Testing |

|---|---|---|

| Primary focus | Code flaws, CVEs | System behavior under adversarial conditions |

| Testing model | Static, point-in-time | Dynamic, matched to system evolution |

| Scope | App, network, endpoint | Model, data pipeline, API, integrations |

| Failure mode | System breaks when exploited | System functions but produces harmful outputs |

AI penetration testing does not replace traditional security testing. It addresses the risk categories that traditional methods were never designed to detect.

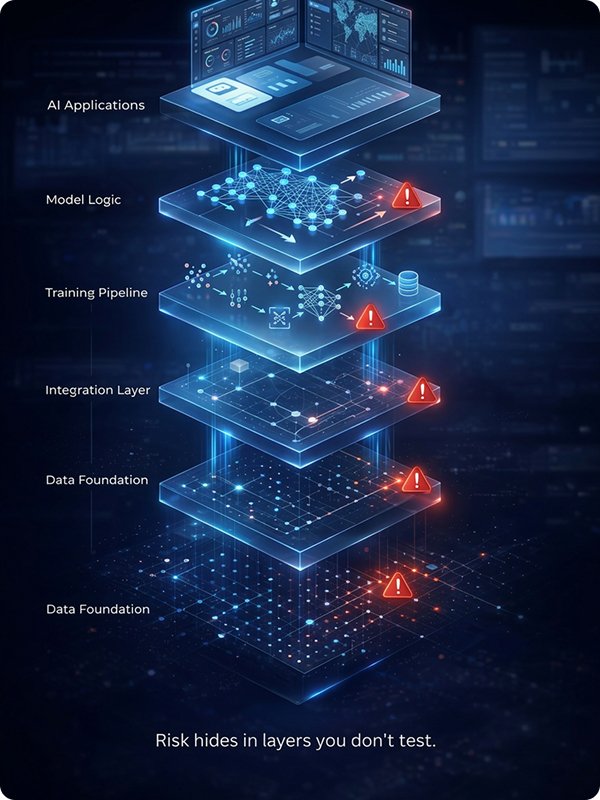

Where Risk Actually Enters

- Prompt injection: Overriding model constraints through manipulated inputs

- Model evasion: Inputs crafted to bypass detection or classification logic

- Data poisoning: Corrupting training data to alter model behavior at scale

- Inference attacks: Extracting sensitive training data from model responses

The Expanding Attack Surface of Enterprise AI

AI risk frequently originates in layers that security teams rarely test: the model logic, training pipeline, and the integration layer connecting AI outputs to downstream decisions.

Why Traditional Testing Falls Short

Static Testing in Dynamic Systems

With the arrival of new data, AI models get retrained. Integrations expand. A test completed at deployment can have near-zero predictive value a few months later. Ongoing risk in systems that evolve continuously cannot be captured by point-in-time assessments

Behavioral Failures vs. Technical Vulnerabilities

Traditional testing finds broken systems. AI risk lives in systems that function correctly but can produce harmful outcomes under specific conditions that generate no error logs, trigger no intrusion alerts, and appear in no vulnerability scan report.

Why Standard Scanning Misses AI Risk

A classification model trained on poorly governed data can pass every technical security check and still produce systematically skewed outputs in production with no indication of failure in any standard test result.

AI Vulnerability Assessment

Building a Risk Baseline

Effective penetration testing requires knowing where risk exists first. AI vulnerability assessment establishes that baseline before testing begins. Without it, testing is resource-intensive and unlikely to surface the most significant exposures.

What a Rigorous Assessment Covers

Assessment defines the scope and priority of what gets tested.

AI Red Teaming

Simulating Real-World Adversaries

Where vulnerability assessment maps exposure and penetration testing validates exploitability, AI red teaming simulates how a motivated adversary would engage with the system over time and what outcomes that engagement could produce.

What AI Red Teaming Involves

| Activity | What It Tests | Why It Matters |

|---|---|---|

| Multi-turn adversarial prompting | Model consistency under sustained pressure | Reveals behavioral drift across interactions |

| Cross-system attack chaining | How exploits propagate through integrations | Surfaces risks invisible in isolated tests |

| Data pipeline integrity attacks | Training data manipulation susceptibility | Evaluates the model’s foundational security |

Red Teaming in Practice

A customer-facing AI assistant may operate within constraints under normal use yet disclose sensitive data or bypass policy guardrails when guided through a structured adversarial sequence. Red teaming finds these scenarios before adversaries do.

From Periodic Testing to Continuous Security Validation

Why Timing Is the Biggest Gap

Annual or quarterly tests generate snapshots of a moving target. By the time findings are remediated and the system retested, the AI may have been retrained, expanded, or integrated with systems that did not exist at the original assessment.

| Validation Model | Frequency | Primary Limitation |

|---|---|---|

| Periodic penetration testing | Annual / quarterly | Exposure between cycles is unmonitored |

| Expanded scope assessment | Semi-annual | Still a snapshot; misses change-driven risk |

| Continuous AI validation | Ongoing, ops-integrated | Requires process and tooling investment |

What CISOs and Security Leaders Must Act On Now

The challenge is not acquiring new tools. It is establishing an accurate view of actual risk exposure distinguishing between assurance grounded in evidence and confidence grounded in the absence of known incidents.

Diagnostic Questions for Security Leadership

1. When were your AI systems last tested for behavioral vulnerabilities and not code-level flaws?

2. Is AI security validation embedded in your model update lifecycle, or treated as a periodic compliance event?

3. Do you have visibility into shadow AI i.e. unsanctioned tools employees use with sensitive data?

4. Have you mapped which AI systems would produce the highest-consequence outcomes if compromised?

The Shift Required

From ‘Did we test our systems?’ to ‘Do we understand how our systems behave under conditions we did not design for?’

| What Enterprises Do Today | What AI Security Requires |

|---|---|

| Assume security after testing | Continuously validate behavior |

| Test code and infrastructure | Test model behavior and outputs |

| Treat security as a checkpoint | Treat security as a lifecycle |

| Periodic audits and fixes | Embedded in AI development cycle |

What Security Architects Must Build Next

Three structural priorities define what needs to be built in AI-integrated enterprises.

- Integrate validation into the AI development lifecycle; security at every stage, not post-deployment

- Layer disciplines; assessment, penetration testing, red teaming, and continuous monitoring in sequence

- Evaluate end-to-end behavior; trace risk across the full data-to-decision pathway, not components in isolation

Looking Ahead: A More Rigorous Standard for AI-Era Security

AI systems now influence decisions with real financial, operational, and reputational impact like Wrong credit decisions , Fraud detection bypass , Data leakage via chatbot , Automated workflows executing malicious actions etc. All of this making their security a continuous responsibility rather than a one-time validation exercise. A credible approach in 2026 starts with understanding where risks exist, validating what is truly exploitable, and evaluating how systems behave under realistic adversarial conditions.

Security, in this context, is not a checkpoint; it is an ongoing discipline.

The standard for AI security is no longer defined by what was tested at deployment, but by how well an organization understands system behavior over time. Enterprises that shift toward continuous visibility—rather than periodic assurance—will be better positioned to manage evolving risk. If your current approach cannot clearly answer how your AI systems perform under unexpected conditions, it may be time to explore a more structured approach to security testing aligned with how modern AI systems actually operate.